CS 154 Homework #8

Due Friday, April 8, 2005

Assignments for Week 8-9: (50 points)

Set!

[20 points -- due 4/8] As an introduction to some of the specialized visual processing that each team will be working on, this problem asks each group to implement a program that plays the card game Set.

If you are not familiar with the game, there is an online description of the rules here.

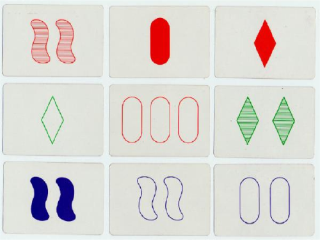

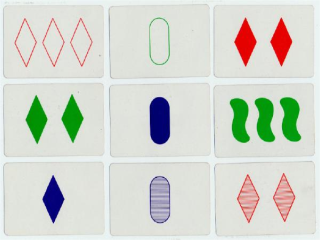

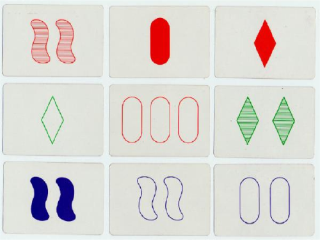

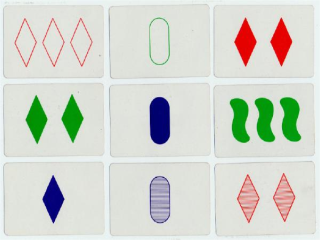

Here are two examples of Set images (in Windows .bmp format):

Your program should assume that there will be nine cards, laid out horizontally in a 3x3 grid, and should somehow indicate (on the cards or in a text window) whether there is a set and, if so, where it is. Note that the hard part for the machine is the easy part of this problem for humans (and vice versa)!
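Once each card's four attributes have been recognized, finding a set is the part that is easy for the machine. Here is a minimal sketch, assuming each attribute (number, color, shading, shape) has already been coded as 0, 1, or 2. The names `Card`, `isSet`, and `findSet` are made up for illustration and are not part of any provided code.

```cpp
#include <array>
#include <vector>

// One card: four attributes (number, color, shading, shape), each coded 0, 1, or 2.
// This encoding is an assumption; use whatever your recognizer produces.
using Card = std::array<int, 4>;

// Three cards form a set when every attribute is all-same or all-different.
// With values coded 0..2 this is the same as the three values summing to 0 mod 3.
bool isSet(const Card& a, const Card& b, const Card& c) {
    for (int i = 0; i < 4; ++i) {
        if ((a[i] + b[i] + c[i]) % 3 != 0) return false;
    }
    return true;
}

// Returns the indices of the first set found (row-major grid positions when
// nine cards are passed in), or {-1, -1, -1} if there is no set.
std::array<int, 3> findSet(const std::vector<Card>& cards) {
    const int n = static_cast<int>(cards.size());
    for (int i = 0; i < n; ++i)
        for (int j = i + 1; j < n; ++j)
            for (int k = j + 1; k < n; ++k)
                if (isSet(cards[i], cards[j], cards[k]))
                    return {{i, j, k}};
    return {{-1, -1, -1}};
}
```

With nine cards there are only 84 triples to check, so brute force is plenty fast.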

One approach to this is to model the foreground colors, find connected components and their density, and then use a shape statistic of some sort to determine the final attribute of each card (this is where the creativity comes in...).
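As a rough illustration of the connected-components step, here is a sketch that labels 4-connected foreground regions in a binary mask and reports each region's area and bounding box. It assumes the color model has already produced the mask, and it takes "density" to mean the fraction of the bounding box that is foreground, which is only one possible reading. `Component` and `findComponents` are hypothetical names.

```cpp
#include <algorithm>
#include <utility>
#include <vector>

struct Component {
    int area = 0;                                // number of foreground pixels
    int minX = 0, maxX = 0, minY = 0, maxY = 0;  // bounding box, inclusive
    // Foreground fraction of the bounding box (an assumed definition of density).
    double density() const {
        return static_cast<double>(area) /
               ((maxX - minX + 1) * (maxY - minY + 1));
    }
};

// mask[y][x] is true for foreground pixels. Flood fill with an explicit stack.
std::vector<Component> findComponents(const std::vector<std::vector<bool>>& mask) {
    const int h = static_cast<int>(mask.size());
    const int w = h ? static_cast<int>(mask[0].size()) : 0;
    std::vector<std::vector<bool>> seen(h, std::vector<bool>(w, false));
    std::vector<Component> out;
    const int dx[4] = {1, -1, 0, 0};
    const int dy[4] = {0, 0, 1, -1};
    for (int y = 0; y < h; ++y) {
        for (int x = 0; x < w; ++x) {
            if (!mask[y][x] || seen[y][x]) continue;
            Component c;
            c.minX = c.maxX = x;
            c.minY = c.maxY = y;
            std::vector<std::pair<int, int>> stack;
            stack.push_back({x, y});
            seen[y][x] = true;
            while (!stack.empty()) {
                const int px = stack.back().first;
                const int py = stack.back().second;
                stack.pop_back();
                ++c.area;
                c.minX = std::min(c.minX, px);
                c.maxX = std::max(c.maxX, px);
                c.minY = std::min(c.minY, py);
                c.maxY = std::max(c.maxY, py);
                for (int d = 0; d < 4; ++d) {
                    const int nx = px + dx[d], ny = py + dy[d];
                    if (nx < 0 || ny < 0 || nx >= w || ny >= h) continue;
                    if (!mask[ny][nx] || seen[ny][nx]) continue;
                    seen[ny][nx] = true;  // mark when pushed so no pixel is queued twice
                    stack.push_back({nx, ny});
                }
            }
            out.push_back(c);
        }
    }
    return out;
}
```

The number of large components on a card gives its count directly; the shape statistic is still up to you.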

Final Project: part 1

[30 points -- due 4/8]

Each of the final projects requires a visual/image-processing component as well. For April 8, each team should update their website with

- a description of the vision processing their system is using

- several screen shots of it working (some of it not working are also a great addition!)

- a link to their source code (probably just the main .cpp file of the VideoTool project suffices here)

Here is a rundown and a few comments on the vision-based goals for each of the projects (some projects have more than one group working on them). AIBOers, you're not forgotten, but you've already checked out your goals -- and demonstrated the first (but probably not the last) servoing algorithm of the term...

- Scavenger Hunt:

Recognizing an arrow and indicating the direction in which it's pointing, relative to the image. I would suggest using two easily-changeable routines for determining what the background and foreground colors are for the arrow, and then finding all of the components of the foreground color that are surrounded by that background color. Following that, you might look for an axis of symmetry (if the arrow itself does not turn) and then look for which side of that axis of symmetry is "heavier" to get the direction it's facing. If the arrow turns, that will take more work!
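One way to make the axis-and-heavier-end idea concrete, for a straight arrow only, is sketched below. It assumes the axis of symmetry coincides with the principal axis of the foreground pixels (reasonable when the shaft is long), and it decides which end is heavier by noting that the centroid sits closer to the arrowhead than to the tail. Both are heuristics I am swapping in, and `arrowDirection` is a hypothetical name.

```cpp
#include <algorithm>
#include <cmath>
#include <utility>
#include <vector>

struct Direction { double dx, dy; };  // unit vector in image coordinates (y grows downward)

// pts: (x, y) coordinates of the arrow's foreground pixels; must be non-empty.
Direction arrowDirection(const std::vector<std::pair<int, int>>& pts) {
    // Centroid of the component.
    double cx = 0.0, cy = 0.0;
    for (const auto& p : pts) { cx += p.first; cy += p.second; }
    cx /= pts.size();
    cy /= pts.size();

    // Second-order central moments give the principal axis orientation.
    double mu20 = 0.0, mu02 = 0.0, mu11 = 0.0;
    for (const auto& p : pts) {
        const double x = p.first - cx, y = p.second - cy;
        mu20 += x * x;
        mu02 += y * y;
        mu11 += x * y;
    }
    const double theta = 0.5 * std::atan2(2.0 * mu11, mu20 - mu02);
    const double ax = std::cos(theta), ay = std::sin(theta);

    // Project every pixel onto the axis, measured from the centroid.
    double tmin = 0.0, tmax = 0.0;
    for (const auto& p : pts) {
        const double t = (p.first - cx) * ax + (p.second - cy) * ay;
        tmin = std::min(tmin, t);
        tmax = std::max(tmax, t);
    }

    // The end nearer the centroid is the heavy end, taken here to be the head.
    if (tmax < -tmin) return {ax, ay};
    return {-ax, -ay};
}
```

A stubby arrow, or one whose head is lighter than its shaft, will defeat this, so check it against real images before trusting it.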

- Hide and Seek:

You have a very intriguing set of notes, including the impressive-looking acronyms rMCL (reverse?) and pKrMCL (no idea). I think your strategies for determining if the other bot is seen look good, as well, though I'm interested in hearing what "by nerf" means and how the wireless might be used. Tagging the robots with colored smocks or hats and making sure they can be IDed will make a good first goal.

- Extinguishers:

You'll need to start by trying out the motors and sensors and then building a base. A reasonable goal for April 8th is to have a working base that can wander (by redirecting after bumping into walls). Also, you should have at least the basics of the flame-detection "vision" working, where a light sensor or two locates the flame and then the robot orients itself toward it.

- Big Brother:

I would start with the detection and tracking of one or two (probably two) particular T-shirts: actually deciding that a T-shirt is of interest might be asking a bit much. I really like the idea of running an MCL filter on where the person is -- I would attack this after getting the basic wandering behind a person (T-shirt) to work by visually servoing on the image position and size of the T-shirt blob.
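Servoing on the blob's image position and size can be as simple as a proportional controller. The sketch below assumes a blob tracker already reports the centroid column and pixel area, that positive `turn` means turning right, and that the gains and target area are tuned by hand. `Blob`, `Command`, and `followBlob` are made-up names, not part of VideoTool.

```cpp
#include <algorithm>

struct Blob { double cx; double area; };          // centroid column (pixels), area (pixels)
struct Command { double forward; double turn; };  // both clamped to [-1, 1]

double clampUnit(double v) { return std::max(-1.0, std::min(1.0, v)); }

// Proportional visual servo: steer to center the blob in the image,
// drive forward or back to hold its apparent size near targetArea.
Command followBlob(const Blob& b, int imageWidth, double targetArea,
                   double kTurn = 1.0, double kForward = 1.0) {
    const double half = imageWidth / 2.0;
    const double xErr = (b.cx - half) / half;                    // -1 (far left) .. +1 (far right)
    const double sizeErr = (targetArea - b.area) / targetArea;   // > 0 when the shirt looks too small
    return {clampUnit(kForward * sizeErr), clampUnit(kTurn * xErr)};
}
```

For example, `followBlob(Blob{240.0, 500.0}, 320, 1000.0)` asks for half-speed forward and a half-rate right turn: the shirt is right of center and looks half as large as desired.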

- Mappers:

I would start by planning, for sure, but within the vision part of this block (the next two weeks), I would also be sure that you can reliably extract and recognize some artificial landmarks (there is lots of construction paper of different colors in the lab) and -- if you'd prefer to tackle the more difficult task of not using artificial landmarks -- some real ones (e.g., doors, fire alarms, something else). In addition to determining the image location of whatever landmarks you will be using, you will _need_ to have a routine that estimates the relative location of the landmark with respect to the camera. It does not have to be perfect, by any means, and can include a wide swath of uncertainty, but you will need to characterize that uncertainty. My suggestion would be to incorporate a set of particles of the landmark's color on the map indicating a distribution of where it might actually be located, given its (1) size = area and (2) centroid. The x and y coordinates of the centroid are likely to be used very differently!
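To illustrate the particle suggestion, here is a sketch that turns one landmark observation into a cloud of possible positions in the camera frame. It assumes a pinhole camera with focal length `f` in pixels, a roughly fronto-parallel landmark of known physical area, range taken from the blob area, and bearing taken from the centroid's x coordinate only. The noise levels are illustrative guesses, not measured values, and how to use the y coordinate is left to you. All names are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <random>
#include <vector>

struct Particle { double x, y; };  // camera frame: x forward, y to the left (meters)

// pixelArea, centroidX: measured from the landmark blob.
// f: focal length in pixels; u0: image center column; realArea: landmark area in m^2.
std::vector<Particle> landmarkParticles(double pixelArea, double centroidX,
                                        double f, double u0, double realArea,
                                        int n, unsigned seed = 0) {
    // Pinhole model: pixelArea = f^2 * realArea / range^2.
    const double range = f * std::sqrt(realArea / pixelArea);
    // Columns left of center map to positive (leftward) bearings.
    const double bearing = std::atan2(u0 - centroidX, f);

    std::mt19937 rng(seed);
    std::normal_distribution<double> rangeNoise(0.0, 0.2 * range);  // range is the weak estimate
    std::normal_distribution<double> bearingNoise(0.0, 0.03);       // radians; bearing is sharper

    std::vector<Particle> out;
    out.reserve(n);
    for (int i = 0; i < n; ++i) {
        const double r = std::max(0.0, range + rangeNoise(rng));
        const double b = bearing + bearingNoise(rng);
        out.push_back({r * std::cos(b), r * std::sin(b)});
    }
    return out;
}
```

The resulting cloud is long along the viewing ray and narrow across it, which is one way of characterizing the uncertainty: area pins down range poorly, while the centroid pins down bearing well.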

Let me know if you run into difficulties with anything on this part -- this is the more important of the two!