Monte Carlo Localization

Daniel Lowd

CS154: Robotics

Overview

I implemented Monte Carlo localization mostly as described, with a few modifications. First, my normalization code generates uniformly distributed random particles whenever some particles are left unassigned. (In my first implementation, I would sometimes end up with a few particles at (0, 0) because they had not been assigned new locations.) Second, I compute and display the average absolute and overall errors of the particles after normalization, which gives an objective sense of the precision and accuracy of the code. I coupled this with a hack that places the robot automatically in four consecutive locations, rather than relying on keyboard movement or mouse placement, to generate statistics on the effect of different actual and modeled noise levels. Since this was somewhat beyond the scope of the project, I did not carry it through to completion. I observed, for example, that accuracy and precision vary from run to run, but I did not attempt to characterize that distribution, only to gain some intuition about the effects of the different factors. I ran these experiments on the simple square map with 100 particles, and localization was generally successful.
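The fallback for unassigned particles can be sketched as follows. This is a minimal illustration and not the code in mcl.cc: the `Particle` struct, the `resample` function, the roulette-wheel selection, and the rectangular map bounds are all assumptions made for the example.

```cpp
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical particle type: a 2D position plus an importance weight.
struct Particle {
    double x = 0.0;
    double y = 0.0;
    double weight = 0.0;
};

// Draw a new particle set in proportion to weight (weights assumed >= 0).
// Any slot that cannot be filled from the old set, for example when every
// weight is zero, receives a uniformly random position instead of being
// left at the default (0, 0).
std::vector<Particle> resample(const std::vector<Particle>& old,
                               double mapWidth, double mapHeight,
                               std::mt19937& rng) {
    std::uniform_real_distribution<double> randX(0.0, mapWidth);
    std::uniform_real_distribution<double> randY(0.0, mapHeight);

    double total = 0.0;
    for (const Particle& p : old) total += p.weight;

    std::vector<Particle> fresh(old.size());
    const double equalWeight =
        old.empty() ? 0.0 : 1.0 / static_cast<double>(old.size());

    for (std::size_t i = 0; i < fresh.size(); ++i) {
        bool assigned = false;
        if (total > 0.0) {
            // Roulette wheel: walk the cumulative weights until the running
            // sum passes a random target in [0, total).
            std::uniform_real_distribution<double> pick(0.0, total);
            const double target = pick(rng);
            double running = 0.0;
            for (const Particle& p : old) {
                running += p.weight;
                if (running > target) {
                    fresh[i] = p;
                    assigned = true;
                    break;
                }
            }
        }
        if (!assigned) {
            // Fallback: scatter the particle uniformly over the map.
            fresh[i].x = randX(rng);
            fresh[i].y = randY(rng);
        }
        fresh[i].weight = equalWeight;
    }
    return fresh;
}
```

Using a strict `running > target` comparison means a zero-weight particle is never selected, so the only way to reach the fallback is for selection to fail outright.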

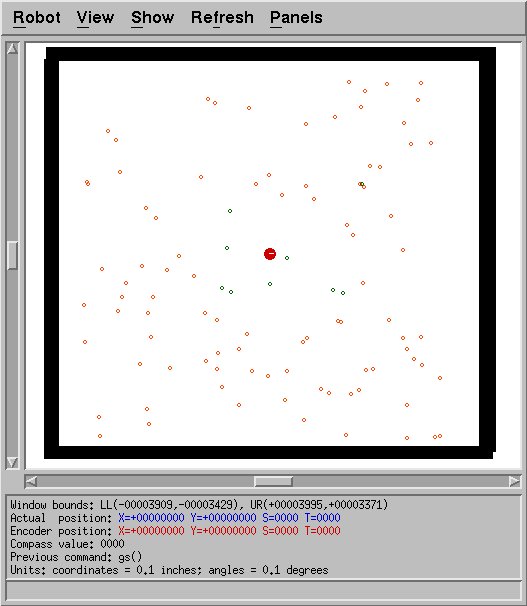

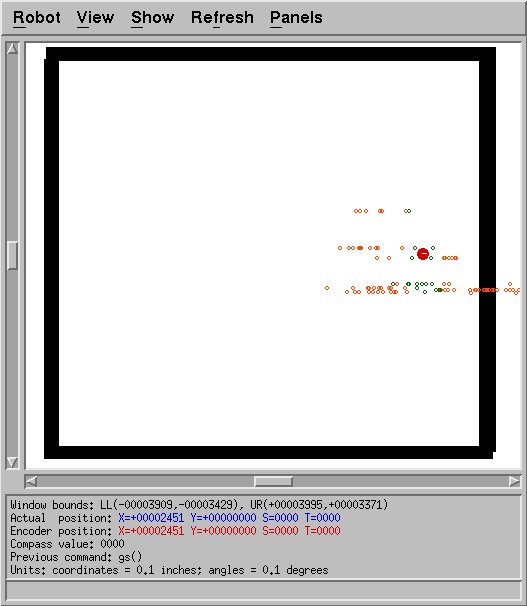

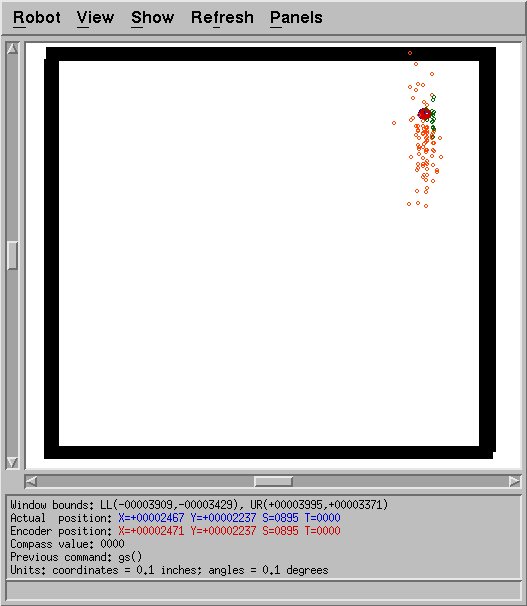

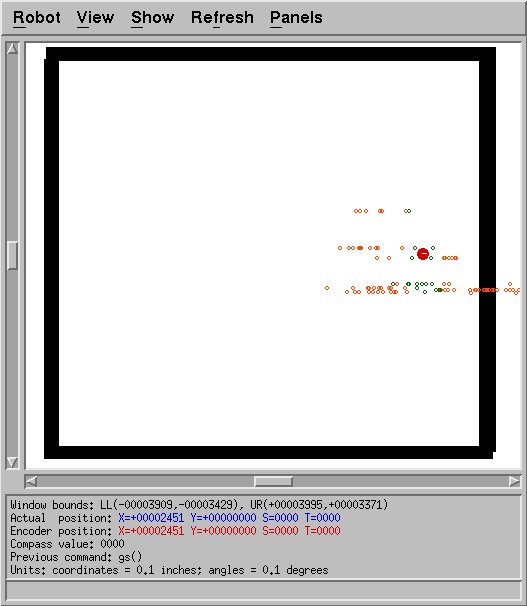

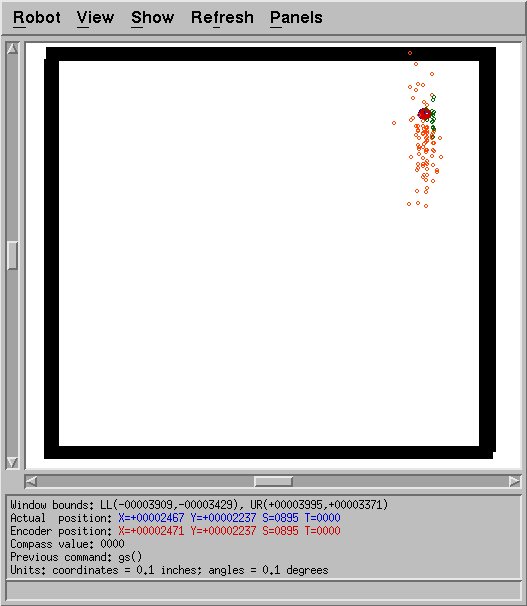

Qualitative Analysis

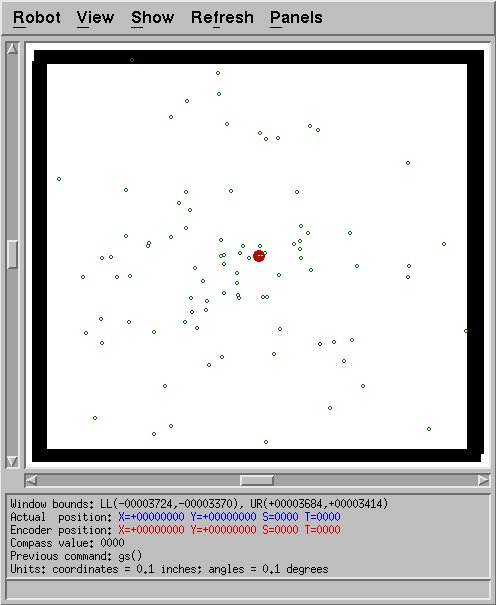

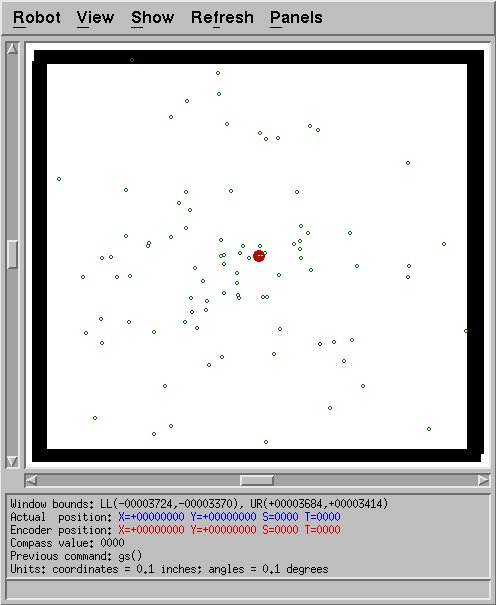

With actual and modeled noise of 10.0 inches (standard deviation), the localization performs well. The following screenshots show part of the progression as the robot travels part of the loop, moved by mouse. The darker circles are the particles selected by the algorithm. The lighter circles are too unlikely and are being cut.

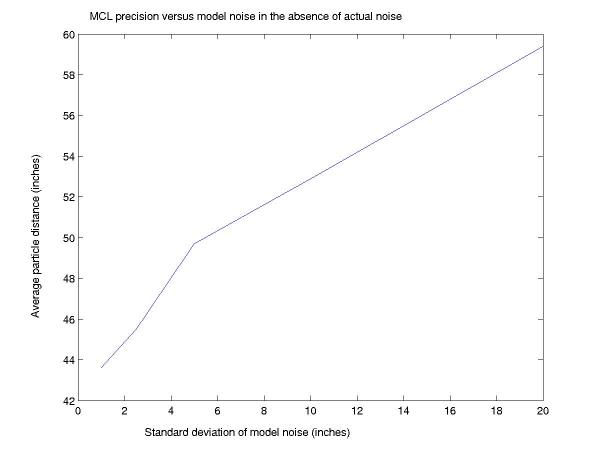

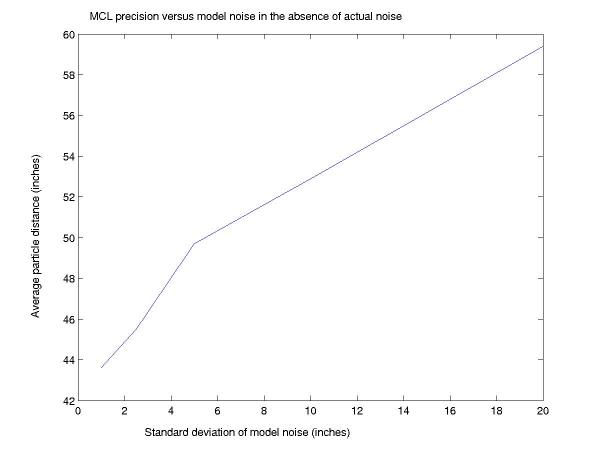

Quantitative Analysis

Automated experiments made it possible to gain a more precise understanding of the relationship between precision and noise. When no noise was introduced to the sonars, less noise in the model yielded more precision, measured as the average distance from the true location to each particle after a certain amount of localization.
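The precision measure just described, together with a companion accuracy measure, might be computed along these lines. This is a sketch under assumptions: the names `Point`, `ErrorStats`, and `measureError` are hypothetical, and treating accuracy as the distance from the particle centroid to the true location is one reading of the "overall error" mentioned in the overview, not something the report spells out.

```cpp
#include <cmath>
#include <vector>

// Hypothetical 2D point type, in inches.
struct Point {
    double x;
    double y;
};

struct ErrorStats {
    double spread;  // precision: mean distance from each particle to the truth
    double bias;    // accuracy (assumed): distance from the centroid to the truth
};

ErrorStats measureError(const std::vector<Point>& particles, Point truth) {
    ErrorStats stats{0.0, 0.0};
    if (particles.empty()) return stats;

    double sumDist = 0.0;
    double sumX = 0.0;
    double sumY = 0.0;
    for (const Point& p : particles) {
        sumDist += std::hypot(p.x - truth.x, p.y - truth.y);
        sumX += p.x;
        sumY += p.y;
    }

    const double n = static_cast<double>(particles.size());
    stats.spread = sumDist / n;
    stats.bias = std::hypot(sumX / n - truth.x, sumY / n - truth.y);
    return stats;
}
```

Two particles placed symmetrically around the robot illustrate the difference: the centroid sits exactly on the true location, so the bias is zero, while the spread remains large.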

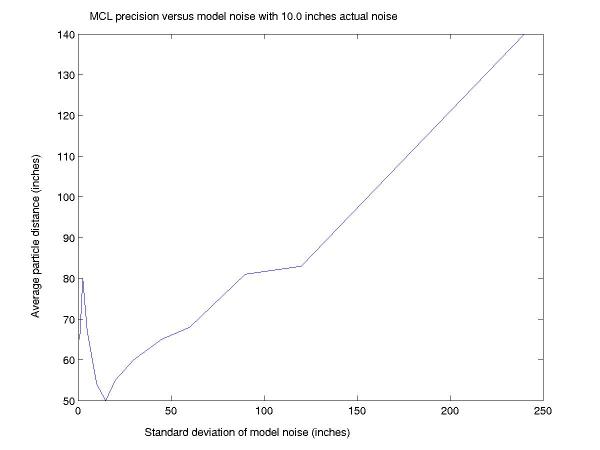

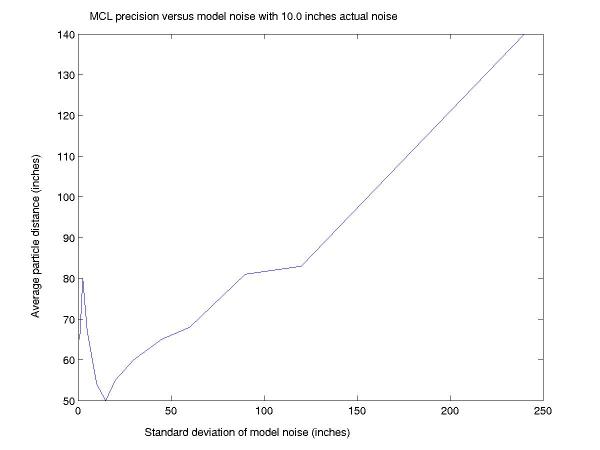

With actual sonar noise of standard deviation 10.0 inches, the graph looks somewhat different.

Here we can see that underrepresenting the noise has detrimental effects, even in a simple box-like room. However, overrepresenting the noise still leads to less precision, as before. Note that this is precision, not accuracy. Though not shown here, accuracy tended to be very good in the no-noise case across all levels of model noise. In the noisy case, accuracy was good with noise modeled at the actual level or somewhat greater, even up through 120 inches. 240 inches was too much to localize effectively, which makes sense, since the maximum sonar value is 255.
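One way to see why both extremes hurt is to look at how a particle's weight responds to the modeled noise. The sketch below assumes a Gaussian sensor model with the modeled noise as its standard deviation; the report does not state which model mcl.cc uses, and the names `sonarLikelihood` and `particleWeight` are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Unnormalized Gaussian likelihood of one sonar reading, given the range the
// map predicts from a particle's position. modelSigma is the modeled noise
// in inches and is assumed to be greater than zero.
double sonarLikelihood(double measured, double expected, double modelSigma) {
    const double z = (measured - expected) / modelSigma;
    return std::exp(-0.5 * z * z);
}

// Weight of a particle: the product of the per-sonar likelihoods, assuming
// the sonar readings are independent.
double particleWeight(const std::vector<double>& measured,
                      const std::vector<double>& expected,
                      double modelSigma) {
    double weight = 1.0;
    for (std::size_t i = 0; i < measured.size() && i < expected.size(); ++i) {
        weight *= sonarLikelihood(measured[i], expected[i], modelSigma);
    }
    return weight;
}
```

Under this model, a reading that is off by 10 inches scores about 0.61 with a modeled sigma of 10, about 0.0000037 with a sigma of 2, and about 0.999 with a sigma of 240. Too small a sigma all but eliminates particles that are correct but saw ordinary noise, while too large a sigma scores nearly every particle the same, so the readings stop discriminating between locations.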

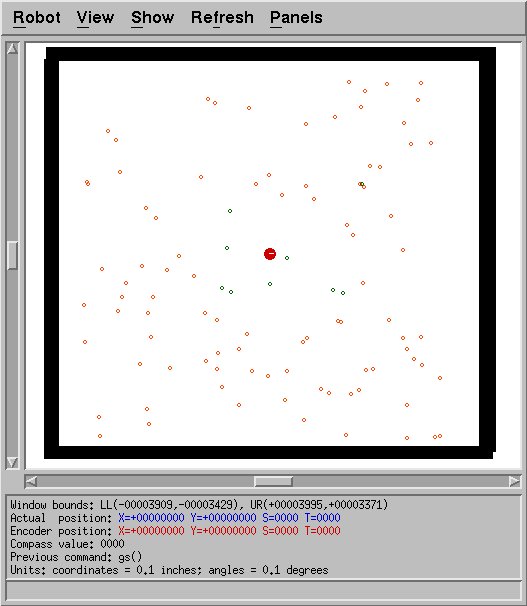

An example of this failure can be seen below. While the average location of the particles is somewhat near the robot's actual position, the estimate is still quite vague. Localization that discriminates more sharply between particles is more effective than this.

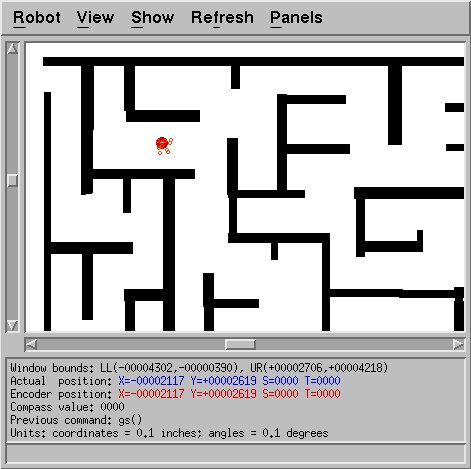

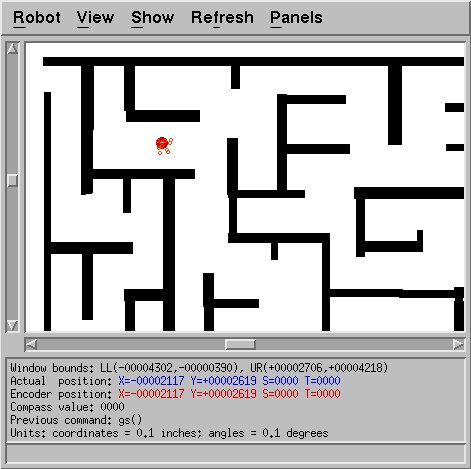

Maze Challenges

The maze map, unfortunately, proved to be much more challenging. Given the complexity of this map, I did not attempt to test as automatically or methodically as with the simpler one. Simply being able to localize consistently would count as a victory, and I managed this only with lower levels of noise. (Alternatively, more particles might help, but I did not experiment with that.) The following image shows an example of successful localization, but just barely. It used 10 inches of model noise, no actual noise, and 100 particles. Even with these parameters, it nearly failed based simply on initial particle placement. Of the nearby particles, only one ended up being identified as useful, and most of the likely clumps of particles were quite far away. It should also be noted that knowing where the robot really is, and letting that knowledge influence where the robot is placed next, is cheating, and probably makes this method work better than it otherwise would. A true test would be to implement entropy-seeking behavior, or to place the robot in a random location and show a human only the particles.

Source Code

mcl.cc -- Complete source code for my implementation of Monte Carlo localization.