| Andrew Campbell | Janna DeVries | Erik Shimshock |

We took some screen shots of our run on Monday. The robot starts off knowing only how far it is from the wall, and it manages to localize quickly and fairly accurately. The first two screen shots show the robot's initial understanding of where it is before moving. The other screen shot shows it localized very close to where it actually is (the drawn robot is incorrect). The code that does most of the work for MCL is Robot.cpp.

The MCL is pretty much working now. The algorithm is implemented mainly in three functions: moving the hypothesis-particles based on the robot's movement, updating the hypothesis-particles' probabilities by comparing their simulated sensor readings with the sensor readings of the real robot, and then resampling the hypothesis-particles based on their probabilities. The sonar, IR, and camera are all incorporated into the model (and can easily be included in or excluded from the calculations using built-in boolean flags). One fancy feature of the code is that all of the sensor calculations have been consolidated into one function, which deals with all of the model-specific details. Particles can be initially placed exactly at the robot's position and orientation, exactly at its position (but with random orientation), randomly in the hallways, or randomly in the hallways but with a matching sonar reading.
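The three steps can be sketched roughly as below. This is a minimal illustration, not the code in Robot.cpp: the `Particle` struct, the function names, the Gaussian sensor model, and the single shared noise parameter are all assumptions, and the simulated sensor reading is passed in as a callback because the real one depends on the hallway map.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <functional>
#include <random>
#include <vector>

// Hypothetical particle: one pose hypothesis plus its (unnormalized) weight.
struct Particle {
    double x, y, theta;  // pose in map coordinates (meters, radians)
    double weight;
};

// Step 1: move every particle by the odometry delta, with Gaussian noise.
// Assumption: the same sigma is used for distance and heading noise.
void moveParticles(std::vector<Particle>& ps, double forward, double turn,
                   double noiseSigma, std::mt19937& rng) {
    assert(noiseSigma > 0.0);  // std::normal_distribution needs a positive sigma
    std::normal_distribution<double> noise(0.0, noiseSigma);
    for (Particle& p : ps) {
        p.theta += turn + noise(rng);
        const double d = forward + noise(rng);
        p.x += d * std::cos(p.theta);
        p.y += d * std::sin(p.theta);
    }
}

// Step 2: reweight each particle by how well its simulated reading matches
// the real reading (Gaussian likelihood, an assumed sensor model).
void updateWeights(std::vector<Particle>& ps, double measured, double sensorSigma,
                   const std::function<double(const Particle&)>& simulate) {
    for (Particle& p : ps) {
        const double err = measured - simulate(p);
        p.weight *= std::exp(-0.5 * err * err / (sensorSigma * sensorSigma));
    }
}

// Step 3: draw a new particle set with probability proportional to weight.
// At least one weight must be positive.
std::vector<Particle> resample(const std::vector<Particle>& ps, std::mt19937& rng) {
    assert(!ps.empty());
    std::vector<double> weights;
    weights.reserve(ps.size());
    for (const Particle& p : ps) weights.push_back(p.weight);
    std::discrete_distribution<std::size_t> pick(weights.begin(), weights.end());

    std::vector<Particle> out;
    out.reserve(ps.size());
    for (std::size_t i = 0; i < ps.size(); ++i) {
        Particle chosen = ps[pick(rng)];
        chosen.weight = 1.0;  // weights reset after resampling
        out.push_back(chosen);
    }
    return out;
}
```

One localization cycle would call `moveParticles`, then `updateWeights` once per enabled sensor, then `resample`. Skipping the `updateWeights` call for a sensor has the same effect as switching that sensor off with a boolean flag.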

I forgot to put up the final version of our wander client, which senses periodically as it moves and uses the center sensor as a bump sensor rather than a stair sensor (since we are not trying to localize near steps).

Erik has finished working on the MCL, which is working pretty well. We've added functionality that makes the robot sense after a short interval of time rather than only when it hits something, as we'd done originally. We also have some pictures, but my internet connection appears to be too slow to upload them. :( Hopefully I can get them up here in the next day or two.

Last night we worked a lot on getting MCL working. Erik worked mainly on the MCL side of things while Andrew and I (Janna) calibrated the sonar and incorporated vision and sonar into our wandering client so that it can sense not just with its IR but with those sensors too. We created a sense function that gets odometry, IR readings, sonar readings, and whether there is red molding, and sends the information to the map tool. We then ran our robot in the hallway to make sure it was interfacing correctly with Erik's updated MapTool, and we got promising results. We also took some video.

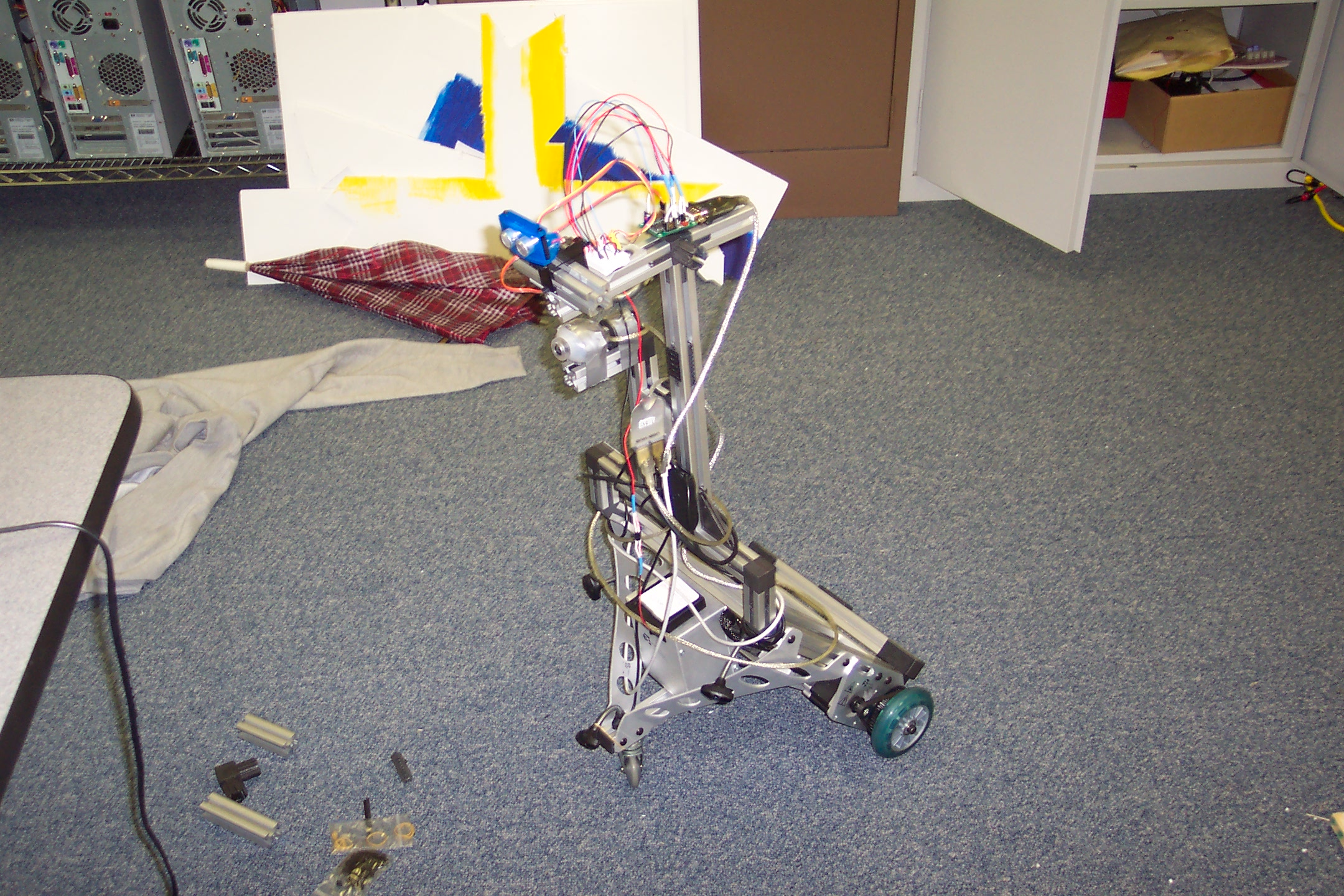

On Friday we built the sonar and mounted it on the robot. On Sunday we started working on how to do wander. We came up with a finite state machine to represent our strategy, then wrote a client that uses it. We used only the IR sensors, not the sonar. We have three IRs: two at the front corners to detect walls and one in the center to detect a lack of floor. The center sensor keeps our robot from accidentally falling down stairs (and may also prevent it from taking too steep a grade). We have a really nice movie that shows our robot detecting the stairs and avoiding them. We also have a log showing one good run we had; we will get screen shots soon.
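A wander strategy of this kind can be written as a small transition function. The sketch below is only an illustration: the log does not list the actual states or transitions, so the four states, the `IrReadings` fields, and the choice to turn left after backing away from a drop are all assumptions.

```cpp
#include <cassert>

// Hypothetical states for a wander behavior driven by three IR sensors.
enum class WanderState { Forward, TurnLeft, TurnRight, BackUp };

// Hypothetical, already-thresholded IR readings.
struct IrReadings {
    bool wallLeft;    // left corner IR sees a wall
    bool wallRight;   // right corner IR sees a wall
    bool floorAhead;  // center IR still sees floor (false near stairs)
};

// Returns the next state given the current state and the latest readings.
WanderState nextState(WanderState current, const IrReadings& ir) {
    // Missing floor overrides everything else: back away from the drop.
    if (!ir.floorAhead) return WanderState::BackUp;

    switch (current) {
    case WanderState::BackUp:
        // Floor is visible again, so turn away before driving on.
        return WanderState::TurnLeft;
    case WanderState::TurnLeft:
    case WanderState::TurnRight:
        // Keep turning the same way until neither corner sees a wall.
        if (ir.wallLeft || ir.wallRight) return current;
        return WanderState::Forward;
    case WanderState::Forward:
        // Turn away from whichever corner sees a wall.
        if (ir.wallLeft) return WanderState::TurnRight;
        if (ir.wallRight) return WanderState::TurnLeft;
        return WanderState::Forward;
    }
    assert(false && "unreachable: all states handled above");
    return WanderState::Forward;
}
```

The client loop would read the IRs, call `nextState`, and issue the drive command for the returned state. Keeping the transitions in one pure function makes them easy to check without the robot.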

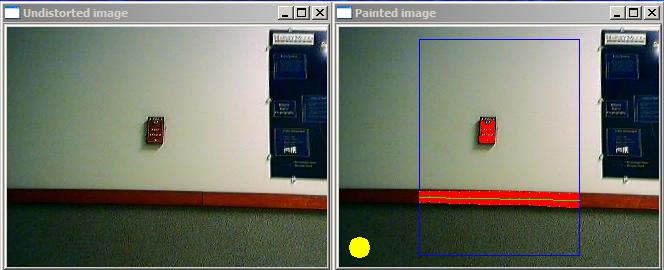

This weekend we continued working on red detection. We discovered that the reds all seemed to be close in S and V, but ultimately determined that this was not very useful. Our final detection of red relies on the HSV values together with the conditions R>G and R>B. We then decided to detect red molding by looking for the top and bottom of an area of red in each column and then fitting a line to the points we found. This yielded pretty good results, and is what we've decided on. We then determined whether we were looking directly at a red molding by checking that the column in the middle of the picture detected red molding and that we were able to fit a line to the rest of the molding. Whether we're looking straight at the molding is indicated by a yellow circle in the bottom left-hand corner. Take a look at the code and screen shots.
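The two pieces of this approach, the per-pixel red test and the line fit, might look something like the sketch below. It is not the project's code: the hue, saturation, and value thresholds are placeholder numbers, and the names `isRed`, `fitLine`, `Point`, and `Line` are made up for illustration. The column scan that produces the top or bottom points is left out.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

struct Hsv { double h, s, v; };  // h in degrees [0, 360), s and v in [0, 1]

// Standard RGB (0..255 per channel) to HSV conversion.
Hsv rgbToHsv(int r, int g, int b) {
    const double rf = r / 255.0, gf = g / 255.0, bf = b / 255.0;
    const double maxC = std::max({rf, gf, bf});
    const double minC = std::min({rf, gf, bf});
    const double delta = maxC - minC;
    double h = 0.0;
    if (delta > 0.0) {
        if (maxC == rf)      h = 60.0 * std::fmod((gf - bf) / delta, 6.0);
        else if (maxC == gf) h = 60.0 * ((bf - rf) / delta + 2.0);
        else                 h = 60.0 * ((rf - gf) / delta + 4.0);
        if (h < 0.0) h += 360.0;
    }
    const double s = (maxC > 0.0) ? delta / maxC : 0.0;
    return {h, s, maxC};
}

// Red test: HSV window plus R>G and R>B. Threshold values are assumptions.
bool isRed(int r, int g, int b) {
    const Hsv c = rgbToHsv(r, g, b);
    const bool hueOk = (c.h < 20.0) || (c.h > 340.0);
    return hueOk && c.s >= 0.4 && c.v >= 0.2 && r > g && r > b;
}

struct Point { double x, y; };           // x = image column, y = image row
struct Line { double slope, intercept; };  // y = slope * x + intercept

// Ordinary least-squares fit through the edge points found in each column.
Line fitLine(const std::vector<Point>& pts) {
    assert(pts.size() >= 2);
    double sx = 0.0, sy = 0.0, sxx = 0.0, sxy = 0.0;
    for (const Point& p : pts) {
        sx += p.x;
        sy += p.y;
        sxx += p.x * p.x;
        sxy += p.x * p.y;
    }
    const double n = static_cast<double>(pts.size());
    const double denom = n * sxx - sx * sx;
    assert(denom != 0.0);  // needs at least two distinct columns
    const double slope = (n * sxy - sx * sy) / denom;
    return {slope, (sy - slope * sx) / n};
}
```

In use, each image column would be scanned with `isRed` to find the top (or bottom) red pixel, and those per-column points would be handed to `fitLine`. How well the points agree with the fitted line is one possible way to decide whether a molding was really found.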

This evening we finished creating the remote control program, which Andrew had previously worked on. The program has two modes: one that moves continuously once an arrow key is pressed, and one that moves a single step per key press. We figure the continuous movement will be more useful, but it is nice to have the option of single-step motion as well.

We began working on the vision portion, seeing red, and had fairly good results at detecting red, especially when the scene is fairly well lit. We found that saturation and value should perhaps be connected -- i.e. when the scene is darker (with a smaller value) then we should allow smaller saturation values to be called red. We're not quite sure how to use this correlation yet, but it seems like it will be reasonably easy to do.
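One possible way to use that correlation is to make the minimum saturation a function of value. The sketch below is purely speculative: the linear form and the endpoint numbers `sDark` and `sBright` are guesses that would have to be tuned against real images.

```cpp
#include <cassert>

// Minimum saturation required to call a pixel red, as a function of value.
// Linear ramp from sDark (at v = 0) to sBright (at v = 1); both endpoints
// are placeholder numbers, not measured thresholds.
double minSaturationFor(double value, double sDark = 0.25, double sBright = 0.5) {
    assert(value >= 0.0 && value <= 1.0);
    return sDark + (sBright - sDark) * value;
}

// True if the pixel is saturated enough for its brightness.
bool saturatedEnough(double saturation, double value) {
    return saturation >= minSaturationFor(value);
}
```

With these placeholder endpoints, a pixel with saturation 0.3 passes in a dark scene (value 0.1) but not in a bright one (value 0.9).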

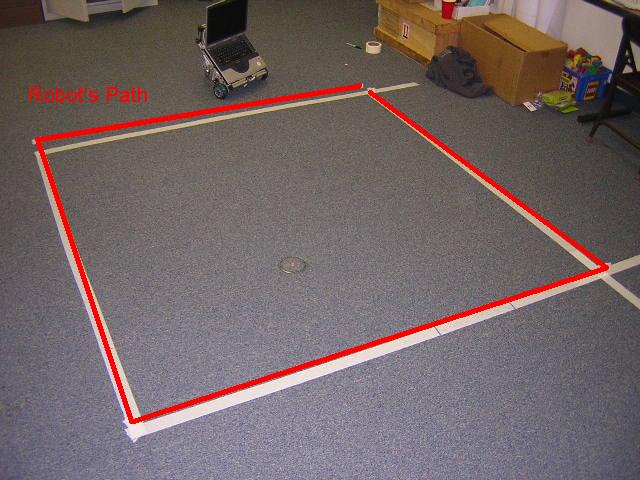

Today we soldered our connector, but we could not connect up our panbot because we could not find a handy board. We then began playing with our robot to get it to move 2 meters and turn 90 degrees. After having difficulty predicting what input would give a turn closest to 90 degrees, we just assumed the input really was in radians and let our experiment show the inaccuracies. We only got 6 of the 10 runs done, and plan to finish the other 4 this weekend. Our turns appear to be very accurate, but the distance traveled was not. Also, our robot appears to list as it travels. From the first run, we generated a nice image of how the robot's path differs from the intended path.

When we started working, our first obstacle was turning on the monitor. It turned out that the plug for the monitor was broken, and swapping in a new one made it work fine. We then found that we had no internet connection, so we could not immediately download the necessary software. After finding that the IP address was incorrect and changing it, we got internet access and downloaded the software. We also had a few issues with the USB drive, but they seem to have been worked out after pointing the computer to a few .sys files. We then got in communication with our evolution, and got it to run. After playing around with the commands some, we added IR sensors and tried to figure out the meaning of the numbers that were returned. We decided to put off writing our own client in C++ until Thursday, when we plan to get a laptop running so our evolution can actually move around.

It MOVES!

Avoiding Stairs

Movie!

Achieving Artificial Intelligence through Building Robots by Rodney Brooks.

Dervish: An office-navigating robot by Illah Nourbakhsh, R. Powers, and Stan Birchfield, AI Magazine, vol 16 (1995), pp. 53-60.

Experiments in Automatic Flock Control by R. Vaughan, N. Sumpter, A. Frost, and S. Cameron, in Proc. Symp. Intelligent Robotic Systems, Edinburgh, UK, 1998.

Monte Carlo Localization: Efficient Position Estimation for Mobile Robots by Dieter Fox, Wolfram Burgard, Frank Dellaert, Sebastian Thrun, Proceedings of the 16th AAAI, 1999 pp. 343-349. Orlando, FL.

PolyBot: a Modular Reconfigurable Robot, Proceedings, International Conference on Robotics and Automation, San Francisco, CA, April 2000, pp. 514-520.

Robot Evidence Grids (pp. 1-16) by Martin C. Martin and Hans Moravec, CMU RI TR 96-06, 1996.

Robotic Mapping: A Survey by Sebastian Thrun, CMU-CS-02-111, February 2002

Robots, After All by Hans Moravec, Communications of the ACM 46(10) October 2003, pp. 90-97.

The Polly System by Ian Horswill, (Chap. 5 in AI and Mobile Robots, Kortenkamp, Bonasso, and Murphy, Eds., pp 125-139).