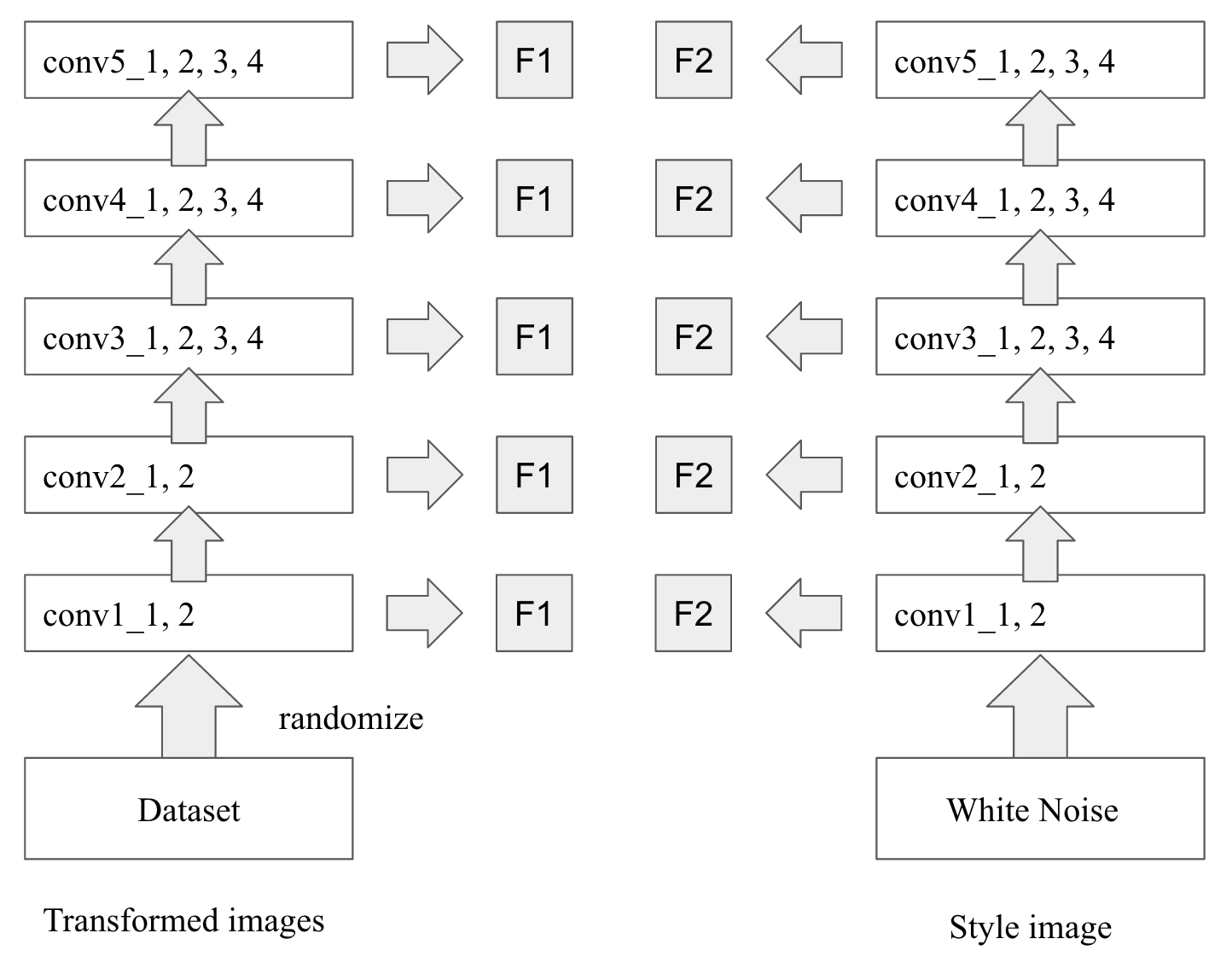

Previous Works

Image style transfer using convolutional neural networks

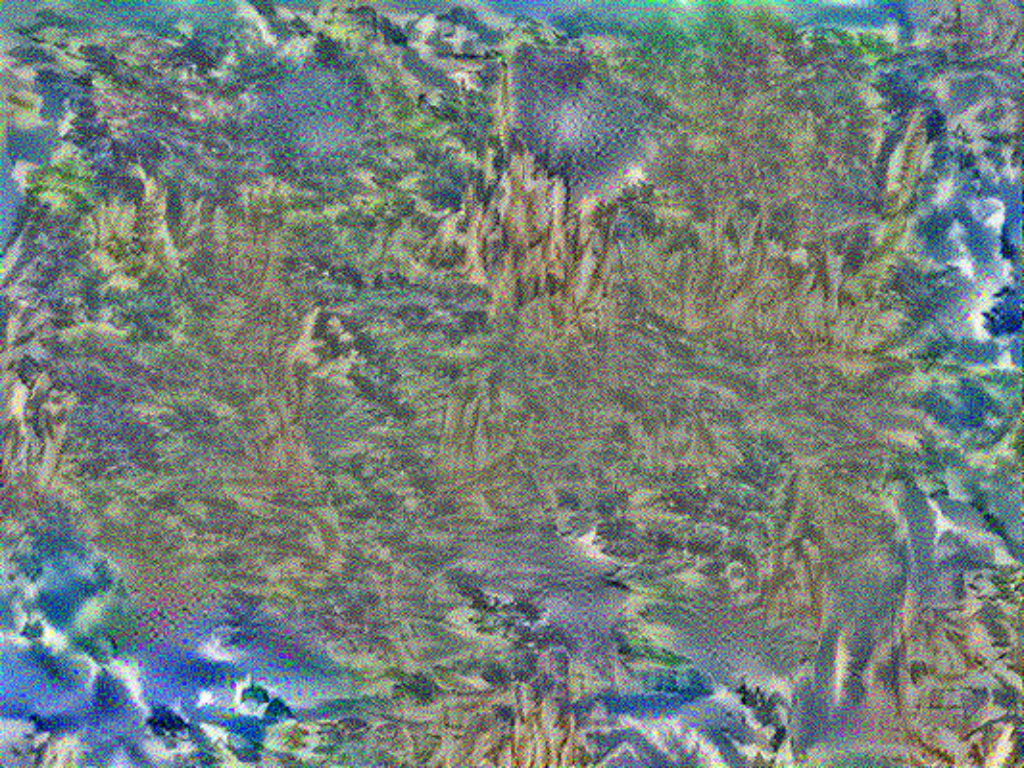

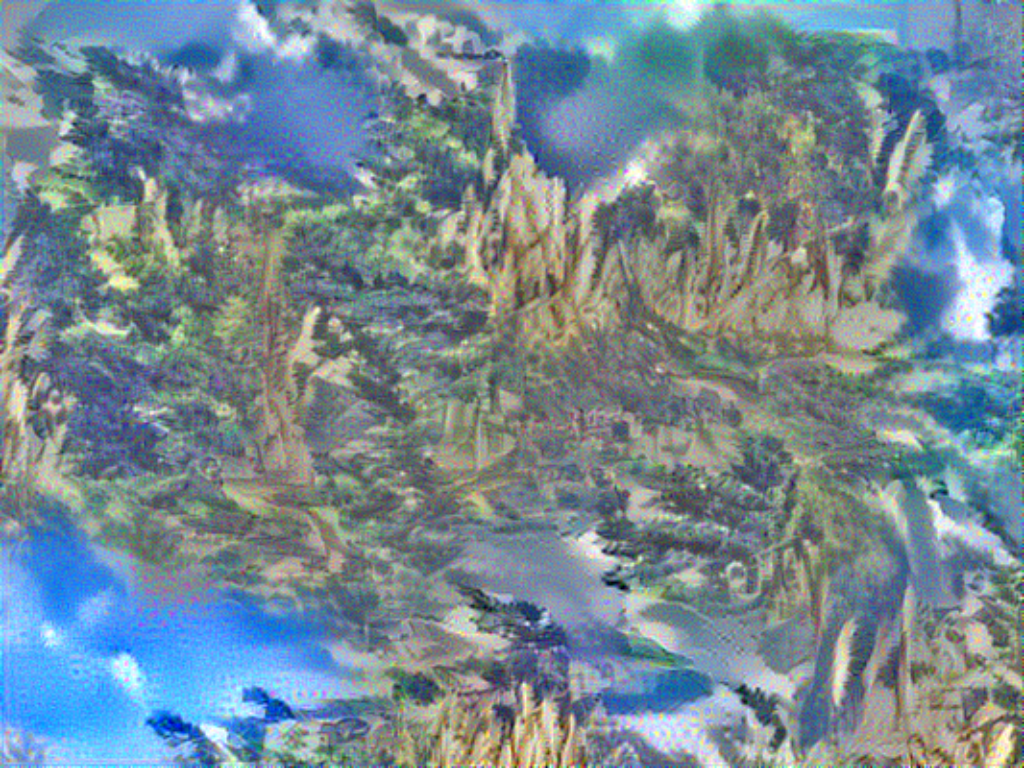

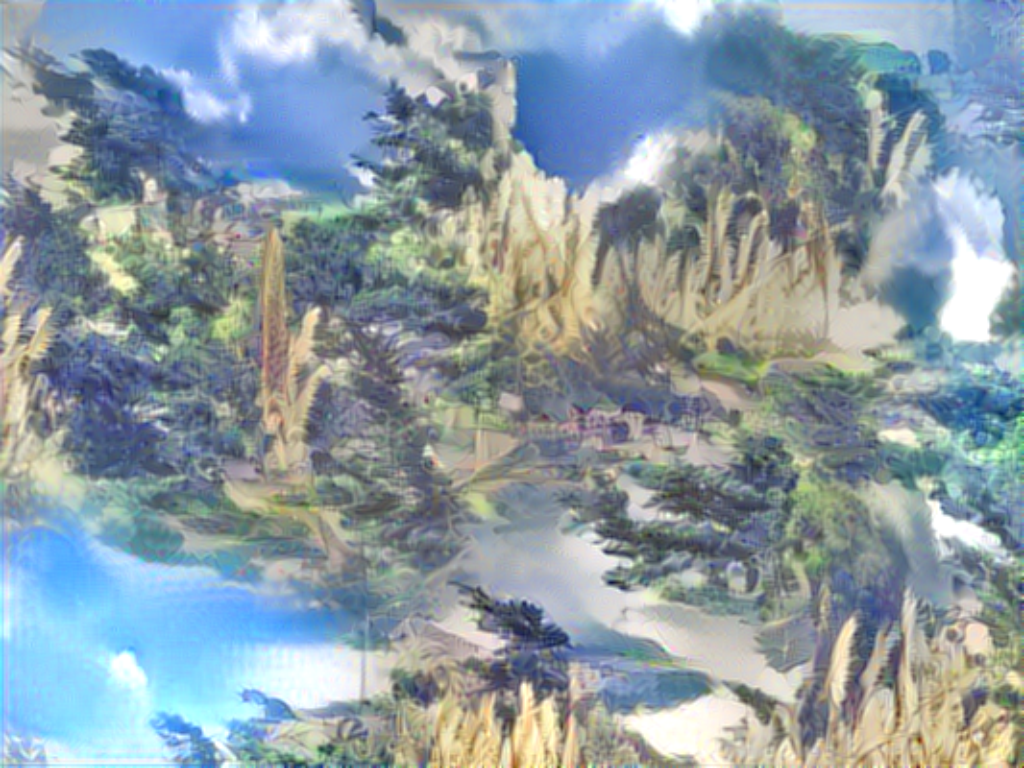

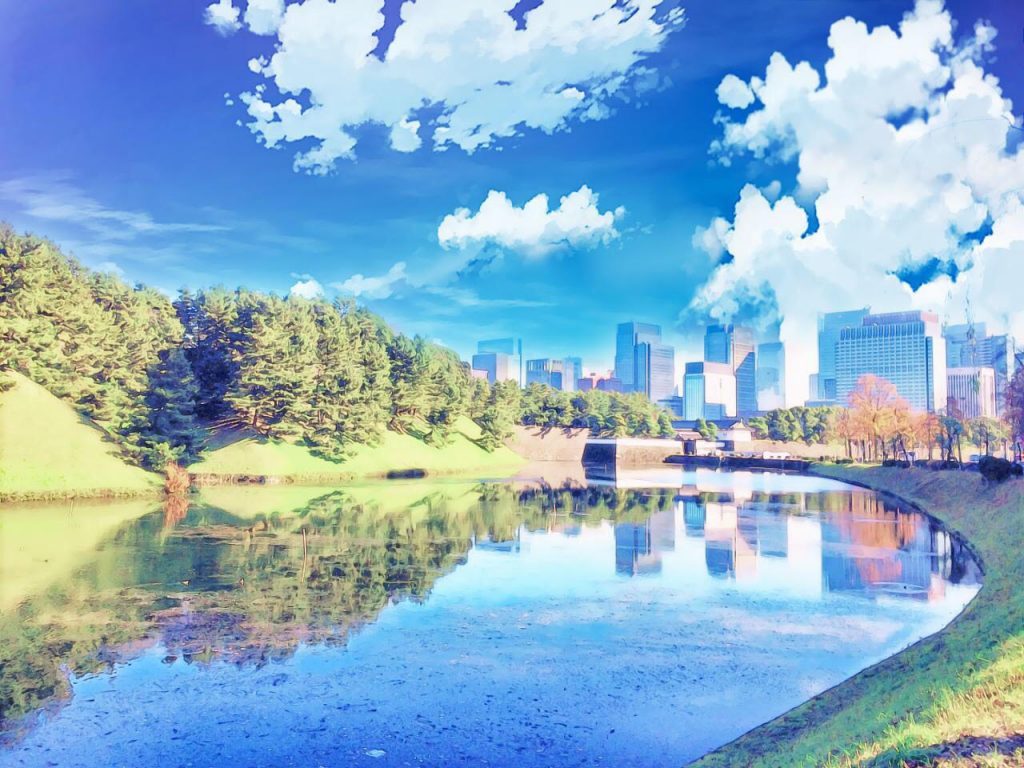

In transferring style problem, the goal is to synthesize a new image that contains both semantic content information from the reference

content image and artistic style information from the reference style image. As demonstrated in Figure 2, an output image

is generated based on a reference content image depicting the Tübinger, Germany, and a reference style image entitled The Shipwreck of the

Minotaur by J.M.W. Turner, 1805. The generated image is able to illustrate the content information similar to the reference

content image of the Tübinge while also render the style information similar to the reference style image The Shipwreck of the

Minotaur.

Figure 2: an example of results produced by a neural algorithm described in Image style transfer using convolutional neural networks.

Left: a content image depicting the Neckarfront in Tübinger, Germany;

Right: a transformed image generated by the algorithm;

Bottom: the painting named The Shipwreck of the Minotaur by J.M.W. Turner, 1805.

This example is taken from Image style transfer using convolutional neural networks.

The definition of style and content of an image is ambiguous. Because we do not have precise definitions of what portions/qualities of an image

contributes to "style" or "content" of an image. These two terms are also vaguely defined, depending on individuals. Before moving forward,

we define a few definitions regarding the similarity between images' style and content.

Suppose $\vec{p}$ represent a content image vector, $\vec{s}$ represent a style image vector, and $\vec{x}$ represent any input

image vector. Consider a convolutional neural network that will be used to represent features of an image. In this paper, the authors

use VGG network with 19 layers, in short VGG-19 network. Suppose layers used to represent a style image is $\mathcal{S}$ and layers used to represent a content image is $\mathcal{C}$.

Definition 1: Let $P^l$ and $X^l$ be features' representations of a content image $\vec{p}$ and an input image $\vec{x}$ on layer $l$

of a trained network. Two images have similar content information if their high-level features obtained by the trained classifier have small

Euclidean distance. In other words, two images have similar style if the following loss function regarding content representation

of two images is small:

$\mathcal{L}_\text{content}(\vec{p},\vec{x}) = \sum\limits_{l\in\mathcal{C}} \left[ \frac{1}{U_l} \sum\limits_{i,j} \left(P_{ij}^{l} - X_{ij}^{l}\right)^2 \right]$

where $X_{ij}^l$ and $P_{ij}^{l}$ are the feature representations on layer $l$ of $i^\text{th}$ filter at position $j$

of an input image and a content image, respectively, as represented by the trained classifier. The term $U_l$ is the total number of

units on layer $l$, used for the purpose of weighting contribution of each layer.

Definition 2: Let $S^l$ and $X^l$ be representations of a style image $\vec{s}$ and an input image $\vec{x}$ on layer $l$

of a trained network. Two images have similar style if the difference between their features' Gram matrices has a small Frobenius norm.

The Gram matrix, $G_\vec{x}^l$, of any image $\vec{x}$ on layer $l$ is defined as follows:

$G_\vec{x}^l = \left[ G_{\vec{x},ij}^l \right]$

where each element of the Gram matrix is given by inner products between feature representations on the trained classifier on

layer $l$ for any two different filters:

$G_{\vec{x},ij}^{l} = \sum\limits_{k}X^{l}_{ik}X_{jk}^l$.

In the same way, two images have similar style if the following loss function regarding style representation of two images is small.

$\mathcal{L}_\text{style}\left(\vec{s},\vec{x}\right) = \sum\limits_{l \in \mathcal{S}} \left[ \frac{1}{U_l} \sum\limits_{i,j}\left( G_{\vec{s},ij}^l - G_{\vec{x},ij}^l \right)^2 \right]$

where $G_{\vec{s},ij}^l$ and $G_{\vec{x},ij}^l$ are elements at $i^\text{th}$ row

and $j^\text{th}$ column of Gram matrices for a style image $\vec{s}$ and an input image $\vec{x}$

based on feature representations in the trained classifier on layer $l$.

Algorithm

In Image style transfer using convolutional neural networks, a deep convolutional neural network (VGG-19) is used as a trained

classifier for obtaining feature representations of any input images, content images, and style images. VGG-19 network is a classifier trained

for solving image recognition task on over 10 millions images. The set of layers used for representing content information is

$\mathcal{C} = \{\texttt{conv4_2}\}$. The set of layers used for representing style information is $\mathcal{S} = \{\texttt{conv2_1}, \texttt{conv3_1}, \texttt{conv4_1}, \texttt{conv5_1}\}$.

More details about the structure of VGG network is available at Very Deep Convolutional Networks for Large-Scale Image Recognition.

The main advantages of using the VGG network, compared to other convolutional neural network, are 1) able to run on a large scale image recognition settings, 2) generalizable with different

datasets, and 3) representable with deep networks. The generalizability over different kind of datsets and its deep network structure make

it possible for each layer to retain photographically accurate information, with different degree of variation. Specifically, the deeper

layer of the network is responsible for global structure of an image, while the shallower layer of the network is responsible for

fine structure of an image, such as strokes or edges. Note that the VGG network is the winner of the

ImageNet Large-Scale Visual Recognition Challenge (ILSVRC-2012).

The dataset ImageNet contains over 10 millions of images with various kind of objects.

Given a reference style image $\vec{s}$ and a reference content image $\vec{p}$, the algorithm starts by putting these two images into the

trained classifier VGG-19 network in order to obtain feature representations on each layer of the VGG-19 network. For style features,

the Gram matrix on each layer $l \in \{\texttt{conv2_1}, \texttt{conv3_1}, \texttt{conv4_1}, \texttt{conv5_1}\}$ is calculated and stored.

For content features, the feature representation on layer $l \in \{\texttt{conv4_2}\}$ is stored. Once we have all feature representations

of reference images, we then try to generate an image $\vec{x}$ that minimizes the following loss function:

$\mathcal{L}_\text{total}(\vec{p},\vec{s},\vec{x}) = \alpha \mathcal{L}_\text{content}(\vec{p},\vec{x}) + \beta \mathcal{L}_\text{style}(\vec{s},\vec{x})$

where $\alpha$ and $\beta$ are weighting factors for content and style reconstruction, respectively. The learning algorithm will then optimize $\vec{x}$ such

that it minimizes $\mathcal{L}_\text{total}(\vec{p},\vec{s},\vec{x})$ using gradient descent algorithm, such as L-BFGS. In the process of optimizing loss function, we start with a randomized white noise. This randomized white noise is put into a trained

classifier VGG-19 network to obtain feature representations in each layer of the network. The Gram matrix is also calculated accordingly

in the same manner. Next, the loss function is calculated. Since the loss function is differentiable, we then follow the gradient descent

algorithm to optimize an input image $\vec{x}$ that optimizes the total loss function $\mathcal{L}_\text{total}(\vec{p},\vec{s},\vec{x})$.