CS181AA Projects

(Spring 2024)

This course is about software project skills other than programming

(e.g. concept development, requirements, architecture and design, reviews,

testing, and management). You will be asked to form teams, and come

up with a single (large) project concept.

Then, over the course of the semester, you will work on different

aspects of that one project:

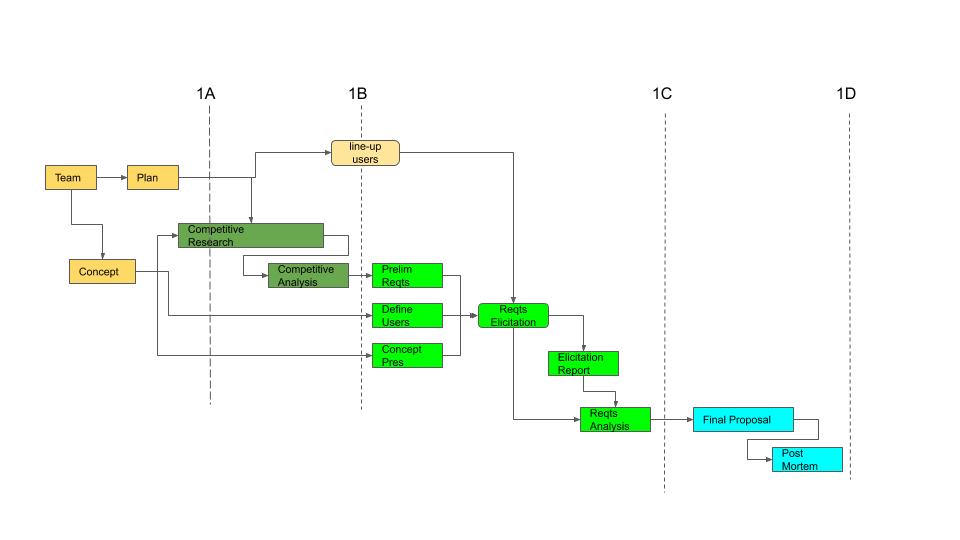

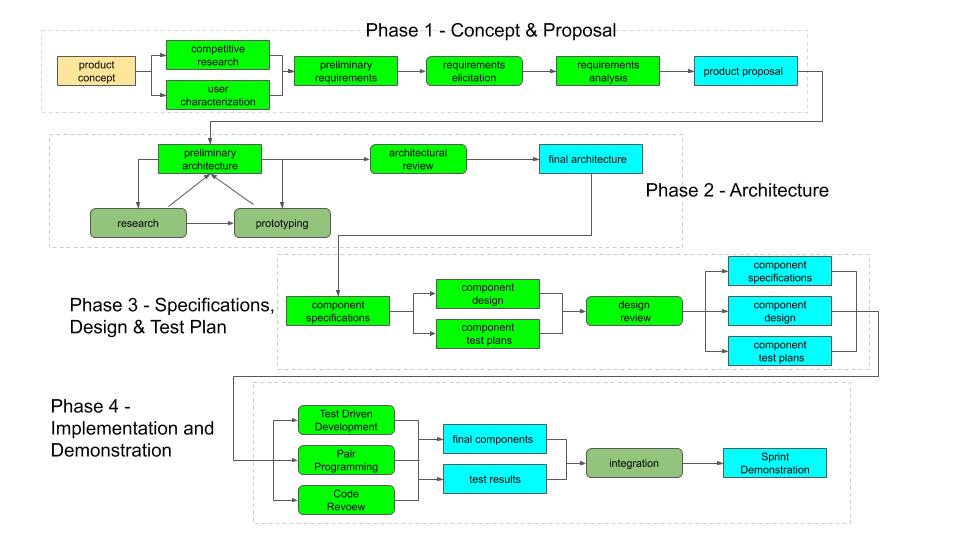

| Due Date [1] | Assignment [2] | Summary of Activities |

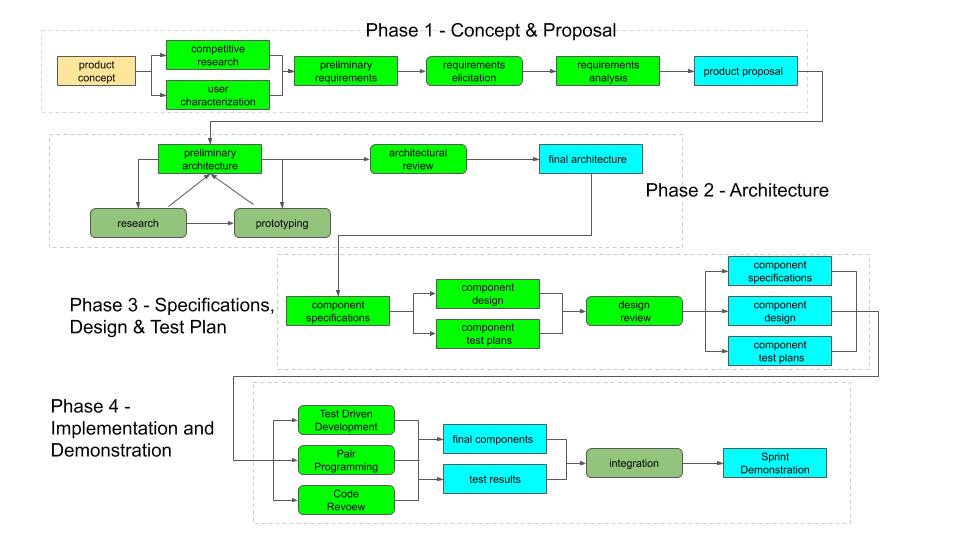

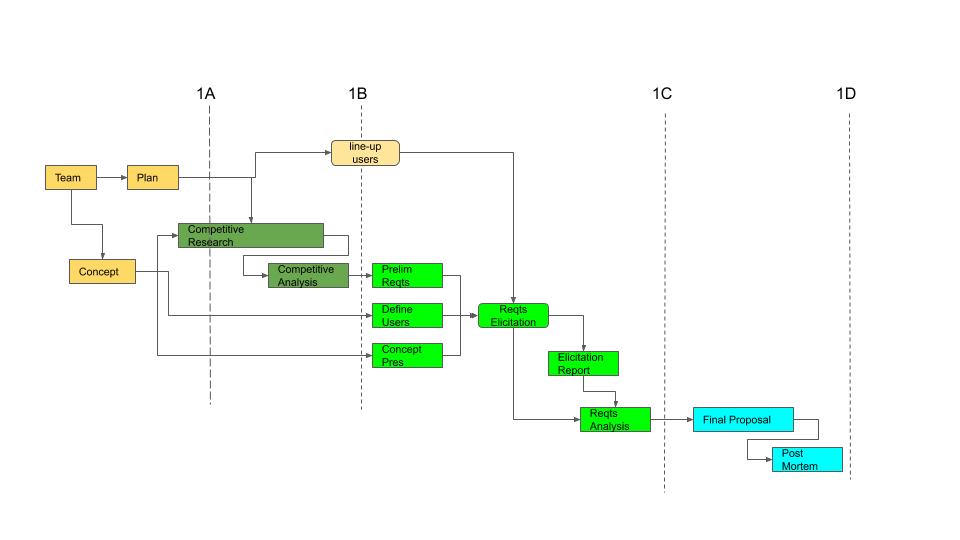

| Phase 1: Concept Development, Requirements and Proposal |

| Sun 1/21 | 1a. Team, Concept, Plan |

Form teams, identify a preliminary concept, write plan for turning it into a proposal. |

| Sun 1/28 | 1b. Competitive Research |

Research existing products in this space, position your proposal against this field,

and develop a competitive value proposition. |

| Sun 2/4 | 1c. Requirements Development |

1. Create a concept presentation to introduce people to the type of product you are exploring

2. Identify and characterize potential users

3. Conduct interviews to gather requirements

4. Analyze and report on the results. |

| Sun 2/11 | 1d. Final Proposal |

1. Combine all of the above into a complete project/product proposal

(what will be built, why you will be successful)

suitable to be submitted for funding/approval.

2. Review the processes you followed in this project to see what lessons you can learn

for how to do things more effectively in the future.

|

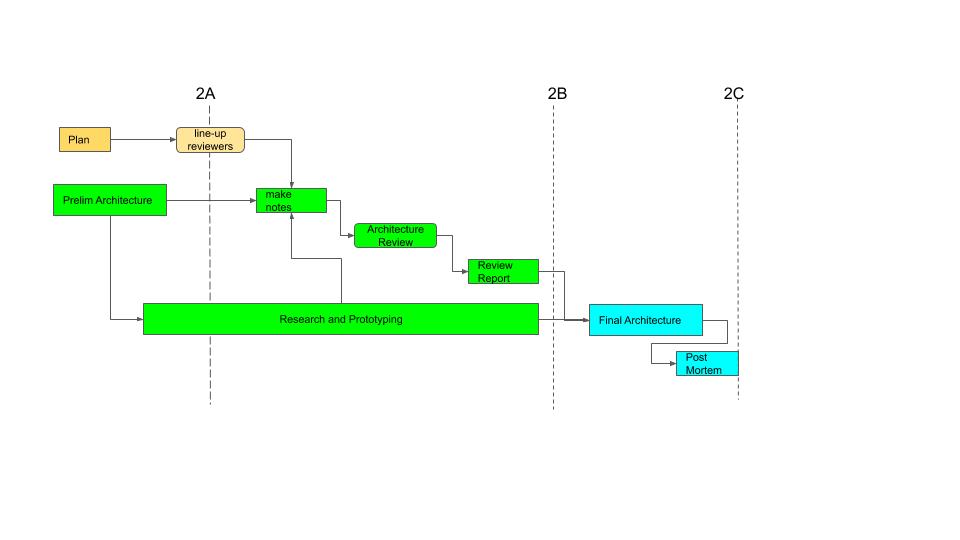

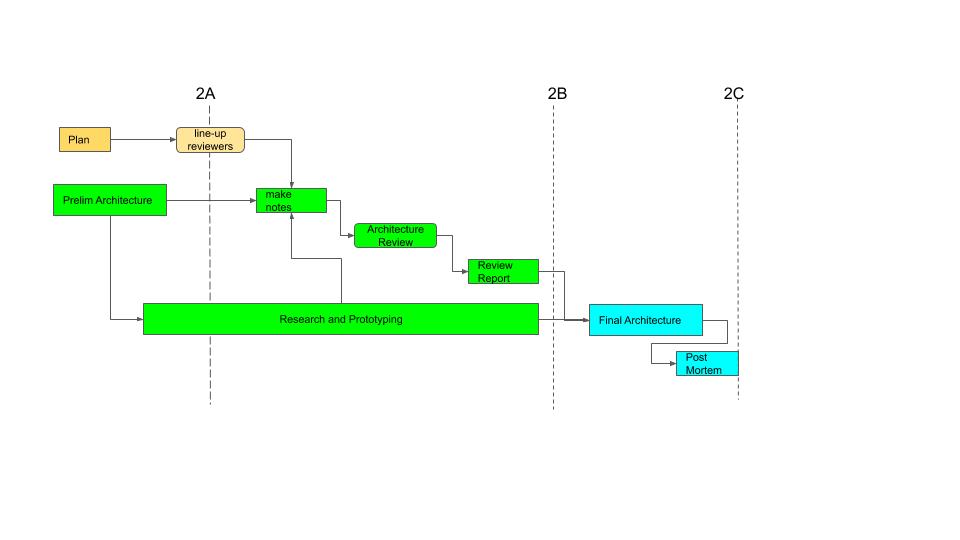

| Phase 2: Architecture and Review |

| Sun 2/18 | 2a. Plan and

Preliminary Architecture |

1. Develop a plan for this project, dividing the work over

your team members and the available time.

2. Develop and document an architecture and high-level component specifications

for your project. This includes doing any required research and prototyping to

address critical questions.

|

| Sun 2/25 | 2b. Architecture Review |

1. Study another team's architecture, and prepare notes for a design review,

as they will study and prepare for a review of yours.

2. Conduct a design review with that other team, as they will with you.

3. Write up a report of the review.

4. Continue to work through any issues with the team that raised them.

|

| Sun 3/03 | 2c. Final Architecture |

1. Revise your preliminary architecture based on the results of your review and investigations,

and submit a report on the identified issues and their resolutions.

2. Prepare and submit a final architectural proposal.

3. Review the processes you followed in this project to see what lessons you can learn

for how to do things more effectively in the future.

|

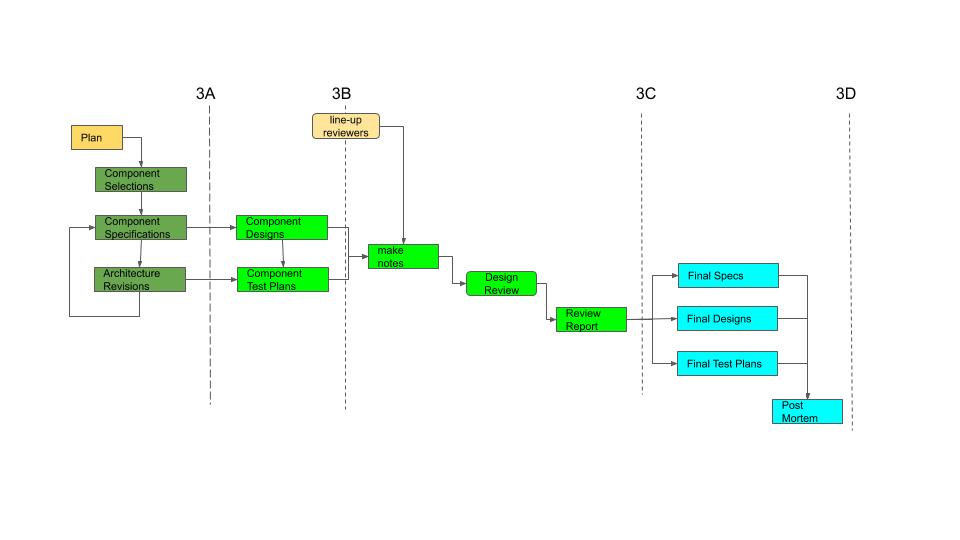

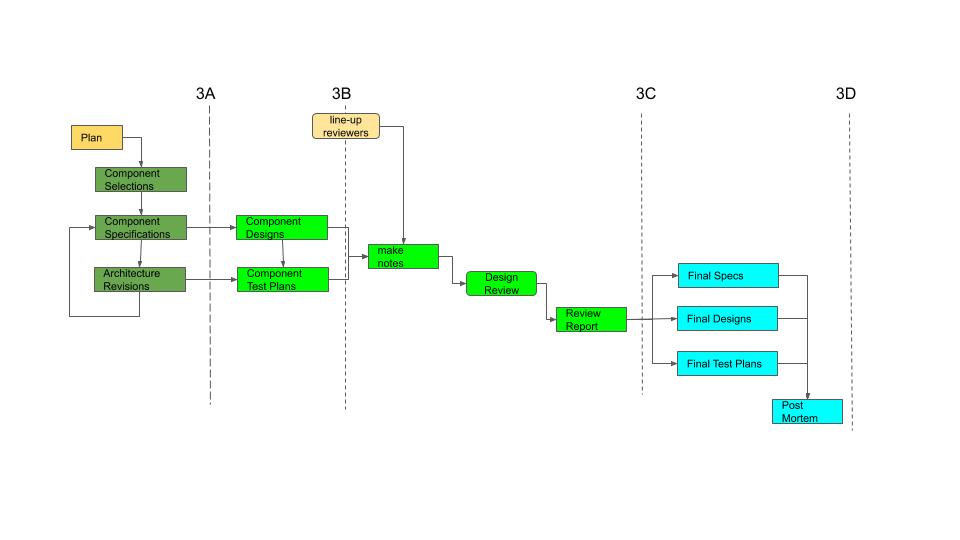

| Phase 3: Component Specifications, Design and Test Plan |

| Sun 3/10 | 3a. Component Selection and Specifications |

1. Select the system components to be designed and implemented

2. Select an owner for each component

3. Develop a schedule and assign responsibilities for the remaining phases

4. As a team, elaborate the architecture above the chosen pieces to

develop detailed specifications for each.

5. As individuals, write up detailed specifications for your assigned component.

|

| Component Design and Test Plan" | 3b. Component Design and

Test Plan |

1. As individuals, prepare a detailed design for your assigned component.

2. As individuals, prepare detailed unit-testing plans for your assigned component.

|

| Sun 3/31 | 3c. Design Review notes,

meeting and report. |

1. As individuals, package your specifications, design and test plans for review.

2. As individuals, read each package and prepare notes.

3. As a group, review each package, and write a report for each review.

|

| Sun 4/07 | 3d.

Final Specification, Design, and Test Plan |

1. As individuals, revise your specifications, designs, and test plans

to address all important issues raised in the review.

2. As a team, review the processes you have followed, and write up a

post-mortem report.

|

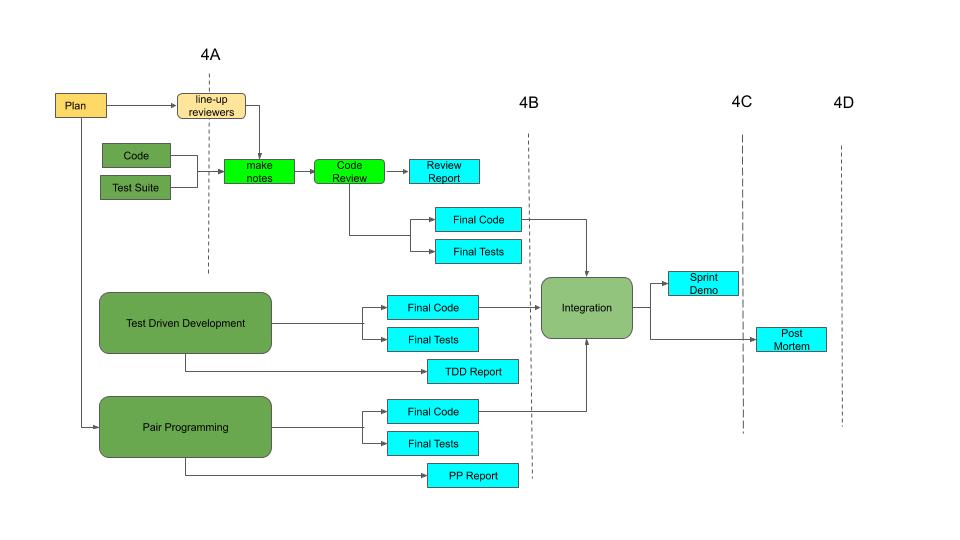

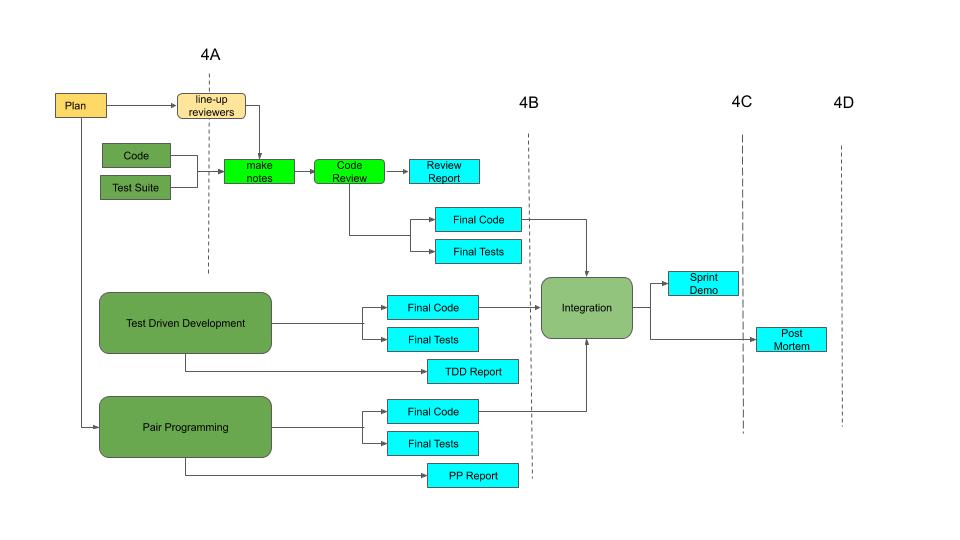

| Phase 4: Implementation and Testing Sprint |

| Fri 4/26 | 4. Final Reports |

1. Each of you will

implement the component

you designed in phase 3.

2. Two of you will spend (at least) one session doing

pair-programming

and write up a brief report on the experience.

3. (at least) one of you will develop your code in a

Test Driven Development

fashion (implementing and running tests as you complete each routine or feature)

and write up a brief report on your experience.

4. (at least) one of you will implement your component and then submit it to

code review

by other members of your team and produce a report from that review.

5. Each of you will implement the

component test plan

you designed (in phase 3)

and use it to validate the correctness of your implementation.

6. As a team, design a

demo

that shows your (independently implemented) components working together.

7. As a team, prepare and present a brief sprint review and demo.

8. As a team, review the processes you have followed, and write up a

post-mortem report.

|

1 Submissions are (modulo late-day-use) due by midnight at the end of the due date.

2 The same

general submission process

is used for all project deliverables.

Each deliverable (including how it will be graded) is

described in the associated

project descriptions.

Your submissions should not assume that your readers have

access to any special or proprietary software.

The best submission formats are probably:

- ASCII text for simple prose

- pdf or Google Docs URLs for formatted reports w/figures

- csv or Google Sheets URLs for spreadsheets

We will in many cases be looking at how plans or specifications have evolved.

This can be captured with Github commits,

or by enabling history in

Google Docs.

For anything you submit as a URL (e.g. Google Docs or Github repos),

please ensure that they are publicly accessible to anyone with the URL.

Work-products will be considered to have been submitted at the time of the last

update or commit and push.

General comments on project rules and grading can be

found at the end of the project descriptions.

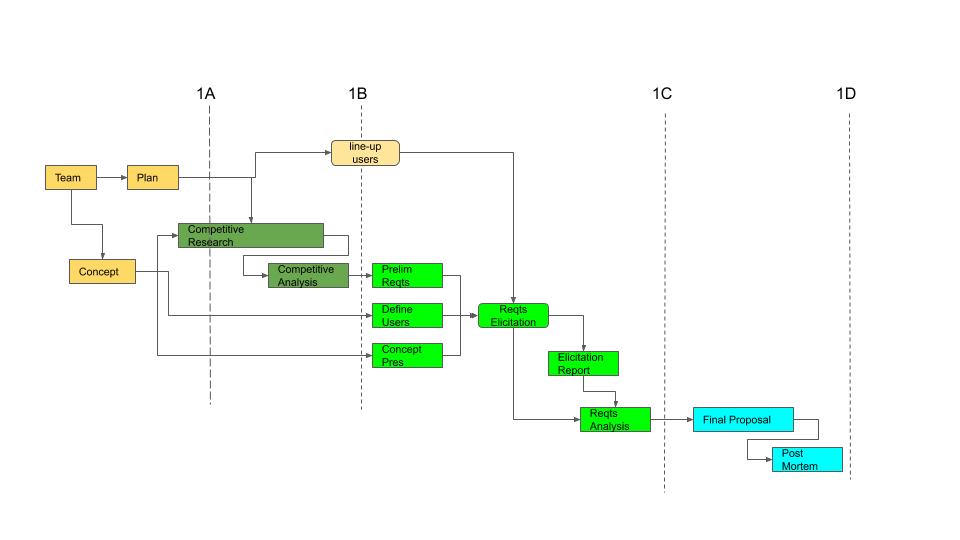

Project Phase 1

Concept Development, Requirements and Proposal

Introduction

What is the difference between a concept and a proposal?

- A concept is something that can be discussed.

- A proposal is something that can be acted on.

Before a grant is given or a new project is funded, a clear

proposal has to be developed for the work to be done, why

it is worth doing, and why the proposed effort is likely to

be successful. There are many steps on the path from

concept to proposal.

- developing an understanding of the problem to be solved

- developing a description of the work to be done

- verifying the feasibility and likely value of the proposed work

- developing a plan for the (above) investigation to be performed

The primary goal of this project is to give you real experience with the

development of a concept, the gathering, organizing and prioritizing of

requirements, and the development of a proposal for a software product.

A secondary goal is to develop your abilities to intelligently

plan a group endeavor, effectively manage it, and critically evaluate

the completed effort.

You are to form into small (ideally 4 person) teams and come up

with a concept for a new or improved software product.

You will spend the next few weeks developing that concept into

a proposal.

There are multiple phases to this project, each of which has its

own goals, processes, and deliverables:

3 less 10% for each unexcused late day.

4 If you submit these early enough, we will try to turn them around

quickly enough to enable you to improve your final proposal based on the

feedback from these earlier assignments.

P1A - Concept and Plan

Every project begins with:

- a concept for something to do

- a plan for exploring the concept

A concept document is a very brief description of what you propose to

do and a justification for why it is worth doing.

The living document that lays out the plan (du-jour), its progress, and its

evolution over the course of the project is the Management Plan.

P1A.1 Concept

Write up a brief (e.g. no more than one page) description of your idea, including justifications

for why you believe it to be:

- valuable as a product

- something that could actually be built and used

- something that you would (given sufficient time) be capable of designing and building

- fun for you to work on

In order to exercise a full range of software engineering skills

(e.g. competitive analysis, requirements, research and prototyping,

architecture, etc), the project you propose must be a fairly large one.

Many acceptable proposals are too large to be implemented by four people

in one semester. Fortunately you will only be required to implement

a few pieces of the whole product.

In choosing your project, make sure that it is one that will enable you

to meet all of the future project requirements. Your project must ...

- be a software development project (designing and implementing

a moderately large amount of code). A research project, user interface,

web site, or extension of existing code will not do.

- be in a space where there is some existing software that you

can research, and against which you can position your proposal.

- have potential users from whom you can gather (a few pages of)

functional requirements.

- require some architecture ... typically this means four or more

distinct functional components (other than the platform on which they execute)

for which you can specify functionality, interfaces, and the means by which

they interact to provide the desired service.

Whether these components interact via calls, client-server protocols,

or through shared data is entirely up to you.

It is acceptable if one or two of these components already exist

(e.g. in standard toolkits or services) ... but you must design the

architecture.

Creating a new plug-in for an existing architecture is unlikely to meet this

requirement.

- have multiple (e.g. at least one per person) moderately large

and complex (e.g. a few hundred lines of non-simple code) components

that you are capable of building, testing, and combining to yield

demonstrable product functionality.

To enable me to assess how well the proposed project will fulfill these requirements,

you should briefly (e.g. 1-3 sentences per component) describe each of the

major run-time components that you expect to implement (or use).

Your initial concept will be graded on the basis of:

- 40% clarity and suitability - I understand what you propose to build

(i.e. what run-time components you will implement, and what functionality

it will provide for what types of people).

- 20% value proposition - I understand why I (or someone else) would find it valuable.

- 20% practicality as a product - I believe it could be successfully built and delivered.

- 20% practicality for this project - I believe you can gather requirements, specify,

design and build it (given sufficient time).

P1A.2 Management Plan

Most of the individual sub-tasks associated with this project can be done

in a couple of hours by one or two people. But there are a great many of these

tasks to be performed, and (you will discover) not a great deal of time in which

to get them done. The only way you will succeed is if you have a plan (who is

going to do what, when, and then who will do what with it) for the entire project

(from initial concept through final proposal and Post Mortem report).

Each team will prepare a task breakdown, identify the dependency relationships between

tasks, assign an owner and a due date to each sub-task, and schedule regular reviews

of both work-products and progress (to allow adequate time to deal with the

problems that will happen).

A good plan will ensure that all work is done before the

due date, but allow people a reasonable amount of slack

in when they have to do each particular task.

A plan to defer everything to the last minute is likely

to fail when last-minute problems are discovered.

An aggressive plan that people won't be able to

achieve guarantees a fire-drill in a few weeks

when it finally becomes clear that it cannot be followed.

Experience has shown that vague plans are easier to create

up-front, but create problems down-stream (when people

turn out to have been unclear about exactly what they were

supposed to do, by when, and in what form they were to

deliver their output). Similarly if you do not specifically

map out the dependency relationships among the tasks, you

may find that your schedule is unrealistic, because it

does not allow sufficient time for sequential tasks.
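One way to check for this is to compute the earliest possible finish day for each task from its duration and its prerequisites. The Python sketch below is only an illustration: the task names, the durations, and the ten-day limit are made-up assumptions, not part of the assignment.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical tasks: name -> (duration in days, set of prerequisite tasks).
TASKS = {
    "concept": (2, set()),
    "competitor list": (2, {"concept"}),
    "competitive research": (4, {"competitor list"}),
    "preliminary requirements": (3, {"concept"}),
    "final proposal": (3, {"competitive research", "preliminary requirements"}),
}


def earliest_finish(tasks):
    """Return the earliest finish day for each task, counting from day 0."""
    graph = {name: deps for name, (_, deps) in tasks.items()}
    finish = {}
    # static_order() yields each task only after all of its prerequisites.
    for name in TopologicalSorter(graph).static_order():
        duration, deps = tasks[name]
        start = max((finish[d] for d in deps), default=0)
        finish[name] = start + duration
    return finish


finish = earliest_finish(TASKS)
days_available = 10  # assumed number of days until the deadline
for name, day in finish.items():
    print(f"{name}: earliest finish on day {day}")
print("fits" if max(finish.values()) <= days_available else "schedule is unrealistic")
```

Each task here looks small, but the longest chain of sequential tasks takes 11 days, so this plan cannot fit into the assumed 10 days no matter how the work is divided among team members.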

More complex deliverables (like the concept presentation and

final proposal) should be broken into sub-tasks. The most

obvious sub-task is to prepare and review a table of contents

before actually writing the content.

If any deliverable appears to be non-trivial, discuss it in

a team meeting and identify (more straightforward) sub-tasks

that it can be broken into.

The keys to ensuring problems are detected (while there is still time to fix them)

are regular communication, and continuous status tracking

(of actual progress vs the plan).

A good management plan will include regular (e.g. daily) status checks:

- status updates, new realizations, and other discussion topics should

be recorded (e.g. text minutes and/or a collection of white-board

snapshots in Google Docs).

- As problems are recognized and you respond to unanticipated events,

you will have to revise your plan to deal with them.

Your plan should also be maintained as a file

(e.g. plan_1.txt in your repo or a Google Doc with history).

- If deadlines are missed, or deliverables fail to pass review,

the fact, as well as the causes and the plan to remedy them must

also be documented.

You can prepare and submit this plan in any form you like

(e.g. perhaps a Google Sheet), but the sort of information I had in mind was:

| Task/Deliverable | Due Date | Owner | 1st Draft | Review Due | Final Version | Dependencies | Risks | Comments |
|---|---|---|---|---|---|---|---|---|
| concept | 9/9 | Algernon | 9/6 | 9/6 | 9/7 | none | it will be too hard to spec/design | discuss implementability in our review |
| management plan | 9/9 | Zebulon | 9/7 | 9/7 | 9/8 | careful reading of assignment | leave something out | multiple people read assignment, review against deliverables and grading standards |
| list of competing products | n/a | Xenophon | 9/9 | 9/10 | 9/11 | concept | miss important competitors | review list before continuing |
| competitive research | 9/16 | Xenophon | 9/12 | 9/14 | 9/15 | list | miss important features | first review is outline of key features |
| ... |

MEETINGS AND PROGRESS MONITORING:

We will have a face-to-face meeting every Tuesday after class to review

our plan for the week.

We will have a 5 minute chat each day at 8PM for a quick status check.

Other working meetings will be scheduled as needed.

If something goes wrong, send email to the rest of the team THAT DAY.

Zebulon will ensure that all of these are added to minutes.txt

on a daily basis.
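If you keep a plan like the sample above in machine-readable form, a few lines of code can flag rows whose dates cannot work. The following Python sketch is one possible check, not a required tool. The dictionary layout and the `check_plan` helper are hypothetical, dates are written as (month, day) pairs, and the dependency "list" in the sample is read here as the list of competing products.

```python
# Rows from the sample plan; dates are (month, day), None means "n/a".
# "deps" lists only dependencies that are themselves tasks in the plan.
PLAN = {
    "concept": {
        "due": (9, 9), "draft": (9, 6), "review": (9, 6), "final": (9, 7),
        "deps": [],
    },
    "management plan": {
        "due": (9, 9), "draft": (9, 7), "review": (9, 7), "final": (9, 8),
        "deps": [],
    },
    "list of competing products": {
        "due": None, "draft": (9, 9), "review": (9, 10), "final": (9, 11),
        "deps": ["concept"],
    },
    "competitive research": {
        "due": (9, 16), "draft": (9, 12), "review": (9, 14), "final": (9, 15),
        "deps": ["list of competing products"],
    },
}


def check_plan(plan):
    """Return a list of human-readable problems found in the plan."""
    problems = []
    for name, row in plan.items():
        if not row["draft"] <= row["review"] <= row["final"]:
            problems.append(f"{name}: draft, review and final dates are out of order")
        if row["due"] is not None and row["final"] > row["due"]:
            problems.append(f"{name}: final version lands after the due date")
        for dep in row["deps"]:
            if plan[dep]["final"] > row["draft"]:
                problems.append(f"{name}: first draft is due before '{dep}' is final")
    return problems


problems = check_plan(PLAN)
print(problems or "no scheduling problems found")
```

A check like this only catches mechanical errors. It cannot tell you whether the time allotted to a task is realistic, which is what the regular status checks are for.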

Your initial Management Plan will be graded on the basis of:

- 40% good use of time and resources (work apportioned reasonably over the available time)

- 20% specificity of plan (clear responsibilities: who, what, when)

- 20% provisions for early detection of problems, and time to deal with them

- 20% completeness (with respect to tasks and activities)

Maintain your concept description with history (e.g. ASCII text on Github or in Google Docs).

Depending on its format, you might prefer to keep your plan in Google Docs, but be sure to document the changes.

You will probably be updating them daily, and we will be reviewing this history.

When you are ready to submit them for grading:

- prepare your proposal (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named concept_1a.txt

- prepare your management plan (e.g. management_1a.txt)

- up-load them for submission (only one person on the team needs to do this)

P1B - Competitive Research and Positioning

Before building a new software product, one should first study the

products that are already available:

- to understand the features they already provide.

- to understand how those features do and do not address users' needs.

- to learn lessons from the things they have done well.

- to learn lessons from the things they have done poorly.

- to understand what value could be added by a new program.

Do research to identify existing products in your space

(or the closest existing products to a new space),

their capabilities, strengths, and weaknesses.

The existing products might be commercial products,

private projects, or open source efforts.

Identify multiple ways in which you could

significantly improve on these products.

(if you cannot significantly improve on existing products,

then there is no need for a new one).

This research is not merely perfunctory:

- if we are to create new value in a space we must understand

the functionality that is already available.

- if we are to be successful, we must learn why and how

those who have gone before us succeeded (and failed).

Start with a brief overview of your product-space, the way(s)

in which you searched for comparable offerings, and your rationale

for choosing the ones you chose to study more deeply.

Write up a brief description of the existing products,

how they arose, how they are used,

and notes on their key strengths and weaknesses.

Note the (positive or negative) lessons you can learn

(about either features or implementation approaches)

from each of these products.

Write up a list of feasible and valuable improvements that you

could reasonably make in a new product, and briefly justify

their value and practicality (e.g. why/how you should be able to do better).

Note that this is a list of ideas, and will be graded on

organization, quality of analysis, and clarity of insight.

It will not be graded as prose (e.g. complete sentences).

Your research and analysis will be graded on the basis of:

- 20% how well you found and described competing, similar

or related products.

- 40% the depth, clarity and credibility with which you

understood and described their capabilities, and called out

ways in which they were successful or unsuccessful.

- 20% the depth and cogency of your comparison of their features

with the feature set that you propose.

- 20% the value you gained from this research (features, approaches

and ways in which your proposal could be improved).

Maintain your research report with history (e.g. on Github or in Google Docs).

When you are ready to submit it for grading:

- prepare your report (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named report_1b.txt

- up-load it for submission (only one person on the team needs to do this)

P1C - Requirements Development

Clarity is power.

Many well-funded projects have failed because they did not deliver functionality that people

actually needed (or did not deliver it in a usable form).

Our success in building a new software product is a function of

how clearly we understand its intended functionality.

Its success in satisfying its users is a function of how well

we understand their needs.

If we do not clearly understand what must be done,

it will only be by accident that we do the right things.

Requirements Development is the (often ignored by developers)

process of developing those critical understandings.

In this phase of the project you will:

- develop your own proposed list of product requirements.

- identify and characterize the types of users for which your product is intended.

- conduct requirements elicitation interviews with representative users from each major class.

- analyze the results of those interviews and develop a set of formal requirements for your project.

P1C.1 - Preliminary Requirements

Brainstorm on your product concept and discuss the capabilities of

existing products to get ideas for what your product should

be able to do.

Identify different types of users (who might have different abilities or needs)

and then identify capabilities, characteristics, and/or use cases for each.

The specific form in which you choose to represent these

requirements is up to you. Basic capabilities may be best

captured by simple declarative sentences. Complex or role

based interactions may be best captured by use cases. Use

the forms that you think best capture the requirements in question.

Again, there is no need for this document to be any more

than organized notes.

Your preliminary requirements document should include:

- the various types of people who might want to use your product.

- the goals that each user would have for the product.

- the needs (features they require in order for the product to be useful),

expectations (features that will make the product more attractive to them),

and abilities (e.g. skills and domain sophistication) different types of users would bring.

- the types of users to which you feel best able to respond

- the requirements you think your product would need to meet to satisfy those users

This is only a brain-stormed initial list. You will gather considerably more input

from potential users in your requirements elicitation.

As such, it probably doesn't make sense to try to go into too much detail

or prioritize these preliminary requirements.

These preliminary requirements will be graded on the basis of:

- 20% how well you understood different types of possible users

- 40% how well you anticipated their likely abilities and expectations

- 40% how well and clearly you were able to capture these needs in requirements

Maintain your requirements with history (e.g. on Github or in Google Docs).

When you are ready to submit it for grading:

- prepare your report (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named reqts_1c.txt

- up-load it for submission (only one person on the team needs to do this)

P1C.2 Concept Presentation

Before asking potential users for requirements suggestions, we have to give

them some idea of what we are talking about. This should be a brief (five

minutes or less) presentation on the type of product being considered.

This is not a sales pitch!

As we will discuss in our lecture on requirements,

it is crucial that we not contaminate the panel with our own thoughts. The purpose

of a requirements elicitation is for us to get information from potential users.

As such, the presentation should be limited to establishing a context for the discussions to follow.

The deliverable is a brief (3-6 minute) prepared presentation (including slides and/or other visual aids)

introducing the product concept as background for the requirements elicitation.

This presentation will be graded on:

- 40% its effectiveness in establishing context (the interviewees

understand the form, purpose, and use of the intended product).

- 10% how effectively it stimulates interest in the product, making them

eager to provide input.

- 10% its length: 3-6 minutes

- 10% the extent to which it does not otherwise contaminate or prejudice the

panel members.

- 20% logical/information presentation structure.

- 10% how good an impression it makes (polished and professional).

This presentation will be given at the

start of your requirements elicitation session, but

any prepared materials (e.g. slides) must be prepared

and made available for review prior to the actual elicitation.

You may prepare and deliver this presentation in any

form you choose, but the written submission should be

in some relatively universal format (e.g. pdf, HTML,

Google Presentation).

Maintain your concept presentation on github (or in a Google Presentation with history).

When you are ready to submit it for grading:

- create a standard submission prologue

(that includes a URL to the source or viewable slides/html) and

name it concept_1c.txt

- up-load it for submission (only one person on the team needs to do this)

P1C.3 - Requirements Elicitation

As described in the Requirements lecture, there are many possible sources

of product requirements. One of the most important sources is the intended

users. The better we understand what they do, and how the proposed product

would be used, the better we can design a product to meet their needs.

Each team will be asked to plan and conduct a session in which they will

gather requirements information from potential users.

This is a ~30 minute face-to-face meeting with potential users where

you will gather information to develop and validate requirements.

-

Briefly (in less than 60 seconds) introduce yourselves and the agenda

-

Give your concept presentation

-

Start with open-ended information gathering about the relevant activities,

how they pursue them, what problems they encounter, and what they wish they could do.

-

Then move into more specific questions, following up on interesting points

raised in the open-ended questions, and posing the more directly product-focused

questions that you have come up with.

-

Get their comments on information you have gained from other sources.

Note: It is possible that, in the process of giving you

their own requirements, the customers will actually

touch on all of your own previously gathered requirements.

If this is the case, when you get to that part of the

agenda, specifically affirm that they have reiterated

(or how they have changed) that prior input.

-

Present a summary of the key messages you have gotten from them today,

and give them the opportunity to correct/amend those.

-

Thank them for their participation.

Team roles:

- Moderator: Leads the meeting, discussions, etc.

- Scribe: Takes notes, and presents a summary of what has been heard at the end.

- Others:

All team members will play important parts in making this a success.

The moderator and scribe may be too busy to actually

think about and process what is being said in real time.

Other team members should:

-

Distill long discussions into punch-lines for the summary.

-

Keep track of points that need further clarification before we are done.

-

Check off comments that relate to previously suggested requirements,

and maintain a list of requirements that still require validation.

- Watch the agenda and the clock,

and help the moderator keep to the agenda and

make sure that nothing is missed (because it is difficult to think while talking).

This process will be graded on the basis of:

- 25% the extent to which you are able to adhere to the process (which will be described

in the reading, given to you in writing, and demonstrated in class).

- 50% the extent to which you are able to obtain, clarify, and digest useful information

from the panel about their activities, needs and prior experiences, and perceptions

in this area.

- 25% the extent to which you are able to confirm the correctness of your

understandings and the information gained, and validate/clarify prior

assumptions and questions about requirements.

When you have identified a panel of potential users, schedule an appointment

(with me or a grader) for your requirements elicitation session.

P1C.4 - Elicitation Report and Requirements Analysis

After the elicitation session, the scribe should write up

a summary report, review it with the team, and add it to your repo.

This report should include:

- When and where the session took place and who attended.

- Interesting information gained during the open-ended

information gathering.

- Clear statements of significant new requirements that

were suggested, along with an assessment of how important

each is and why.

- Significant input on previously gathered requirements,

affirming, refuting, or changing them.

- Any other useful results gained from the meeting.

This report is a record/summary of what you learned in the elicitation.

It can certainly call out new things, and organize the input into

clear messages. But this report should be treated as "raw data",

and it is probably best not to add opinions (agreement or disagreement

with what they said) to this record. Those can be explored in the

(subsequent) requirements analysis.

This report will be graded on the basis of:

- 20% form (capturing who, when, where, topic)

- 20% completeness (all key points captured)

- 20% the clarity, organization and readability of the report

- 20% understanding what the customer said (correctly, and deeply)

- 20% a summary that captures and organizes the key input

When you are ready to submit your elicitation report for grading:

- prepare your report (e.g. ASCII text in a file named report_1c.txt)

- put a standard submission prologue on the front of it

- up-load it for submission (only one person on the team needs to do this)

The elicitation report is raw input to the requirements development process.

The characteristics of good requirements are discussed in both

the reading and the lecture on requirements. Starting with

your initial (brain-stormed) requirements:

- revise them based on customer feedback (and your

evolving understanding of the problem)

- add the new requirements gathered from customers.

- look for ambiguous requirements, and clarify them.

- assign a value (e.g. high/medium/low) to each requirement,

and justify that assignment.

- assign a confidence (e.g. high/medium/low) to each requirement

(that you have properly understood it and/or its value).

- look for conflicting requirements, and resolve the

conflicts.

- assign a difficulty (easy, moderate, hard) to each,

and (briefly) justify this assignment.

- assign a priority (e.g. must-have, should-have, nice-to-have)

(based on the combination of value and difficulty)

to each requirement, and then ladder them by priority.

- suggest a cut-off line for release 1.0, and justify

why that is the right place to draw the line.
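The rating, laddering, and cut-off steps above can be sketched in a few lines of Python. This is only one possible scheme: the numeric scales, the scoring rule (twice the value minus the difficulty), the thresholds, the cut-off rule, and the sample requirements are all illustrative assumptions. Your own analysis should use whatever rule you can justify.

```python
from dataclasses import dataclass

# Assumed numeric scales for the high/medium/low and easy/moderate/hard ratings.
LEVEL = {"low": 1, "medium": 2, "high": 3}
EFFORT = {"easy": 1, "moderate": 2, "hard": 3}


@dataclass
class Requirement:
    text: str
    value: str        # high / medium / low
    confidence: str   # high / medium / low
    difficulty: str   # easy / moderate / hard

    def score(self) -> int:
        # Assumed rule: value counts double, difficulty counts against.
        return 2 * LEVEL[self.value] - EFFORT[self.difficulty]

    def priority(self) -> str:
        s = self.score()
        if s >= 4:
            return "must-have"
        if s >= 2:
            return "should-have"
        return "nice-to-have"


# Hypothetical requirements, for illustration only.
requirements = [
    Requirement("export report as PDF", "medium", "high", "easy"),
    Requirement("sync across devices", "high", "medium", "hard"),
    Requirement("log in with school account", "high", "high", "moderate"),
    Requirement("dark mode", "low", "low", "easy"),
]

# Ladder by score, breaking ties in favor of higher confidence.
ladder = sorted(requirements, key=lambda r: (-r.score(), -LEVEL[r.confidence]))

# Assumed cut-off: release 1.0 contains exactly the must-haves.
release_1 = [r for r in ladder if r.priority() == "must-have"]

for r in ladder:
    print(f"{r.priority():12} {r.text}")
```

A mechanical score like this is a starting point for discussion, not a substitute for the written justification of each value, difficulty, and priority assignment.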

When you sit down to come up with a final set of requirements,

it is likely that you will discover that some of the input

you got in your elicitation was not as clear as it seemed at

the time. When this happens, you have a few options:

- If it is unclear, go back to the person from whom you got the requirement

and seek clarification.

- If there are contradictions, understand why different people have

different needs, and see if there is a way to satisfy both (or give

up on one set of users).

Based on the results of your requirements analysis, prepare a priority

ordered list of product requirements. For each, list:

- the stated requirement

- your confidence in that requirement, and where that confidence came from

- its priority, and your justification for that determination

(e.g. your confidence that it is needed by many users,

and why you believe that priority is the correct one).

- its difficulty (to implement), and your basis for that assessment

The requirements analysis will be graded on the basis of:

- 40% completeness of the lists and traceability of the requirements

(e.g. to user input or existing standards).

- 20% reasonableness/appropriateness of the requirements

- 20% prioritization and rationale for that prioritization

- 20% clarity, organization and readability of the report

Maintain your requirements analysis with history (e.g. on Github or in Google Docs).

When you are ready to submit it for grading:

- prepare your report (ideally ASCII text in a file named analysis_1c.txt)

- put a standard submission prologue on the front of it

- up-load it for submission (only one person on the team needs to do this)

P1D - Final Proposal and Post Mortem

Having thought about what you want to build, describing it to others,

and getting their feedback, you should now be prepared to:

- write up a proper proposal for your project.

- assess how this process went, and what you have learned from it.

P1D.1 - Final Proposal

The key deliverable is a written proposal (likely to management or a granting agency)

about what this project is and why they should approve (or fund) it:

- It should include an overview of the problem space and concept.

- It should describe the key types of users and the needs to which your proposal responds.

- It should include competitive positioning information.

- It should include validation (from your customer interviews or other research)

that your proposal responds to a real need and would be a compelling product.

- It should include a list of key requirements the product must satisfy

(and why these requirements can be trusted).

- It should include some basis for believing this project to be feasible

for implementation (given enough time) by this team.

Most of this information has already been assembled (in your initial proposal,

competitive analysis, concept presentation and requirements analysis).

Now you are assembling it into a final form.

Hints (about the real world):

The director to whom you are submitting this proposal can be

viewed as another type of user, with her own sets of needs.

Your product need not respond to your director's needs ...

but your proposal (if it is to be successful) must do so!

This means you need some idea of who your director is, what

constraints she is under, and what problems she is trying to solve.

Note that executives (even R&D directors) are very busy, and

often suffer from short attention spans ... so brief and to-the-point

is generally better than long and elaborate.

This proposal will be graded much more on content than on format, so ASCII

text is completely acceptable. If you want to submit it in a

richer format (e.g. so you can include images), PDF, Google Docs,

or HTML are also acceptable.

This proposal will be graded on the basis of:

- 20% clarity of the problem domain and cogency of the concept

- 20% clarity and depth of the target user descriptions

- 10% clarity, depth, authoritativeness, cogency of the competitive analysis

- 20% clarity and persuasiveness of the value proposition

- 10% clarity, reasonableness, prioritization, provenance of the key requirements

- 10% likelihood that I would fund this effort

(because I believe it can be built and will address a real need)

- 10% likelihood that I would hire the people who prepared this report

(because of the depth and clarity of the analysis and presentation)

Maintain your final proposal on Github (or in a Google Doc with history enabled).

When you are ready to submit it for grading:

- prepare your report (e.g. proposal_1d.txt or .pdf)

- put a standard submission prologue on the front of it

- up-load it for submission (only one person on the team needs to do this)

P1D.2 - Post-Mortem Analysis

This project is a learning exercise, and one of the major ways

we learn is by analyzing past experiences (positive and negative).

You will, as a team, review all aspects of this project. One of you will then

summarize that process into a post-mortem analysis report.

The report should summarize the key issues raised in your post-mortem,

and the conclusions you came to. Your post-mortem discussion

should include:

- the concept definition, planning, research, and brainstorming activities

- the creation of the concept presentation

- the customer requirements elicitation planning, execution, and results analysis

- the organization and creation of the final proposal

- the planning and ongoing management of these activities

- the overall project as an educational exercise.

The submission and grading of Post Mortem reports is described

in the General Grading information.

Make sure that you have kept your meeting minutes and management plan up-to-date.

When you are ready to submit the Post-Mortem report (and management notes) for grading:

- prepare your report (ideally ASCII text in a file named postmortem_1.txt)

- put a standard submission prologue on the front of it

- make sure that it also contains copies or URLs for your meeting minutes and (updated) management plans

- up-load it for submission (only one person on the team needs to do this)

P1D.3 - Work Share Estimates

Most of your grade for this project will be based on the team deliverables, but some of it will

be based on the quality, amount, and timeliness of work done by each team member. Towards this

end, we ask each team member to estimate the

amount of work done by each team member on this project.

Please prepare your own assessment of how much work was done by whom and submit it as

workshare_1.txt (or perhaps workshare_1.csv).

Project Phase 2

Architecture and Review

Introduction

If something is simple, a well-trained person can just sit-down and do it.

But real-world problems often defy simple solutions,

and complex systems tend to be confronted by non-obvious challenges,

and to exhibit unanticipated behaviors. We would like to

simply sit down, start writing the parts that are obvious, and trust that

the rest will become clear to us as we move down that path. That story

has been told countless times, and it almost always ends in tragedy.

Now that you have a well formulated idea of what your product should do, it

is time to figure out how such a product can be built.

This design must be elaborated and described well enough that outside reviewers can

evaluate it, assess its feasibility, and identify holes in your design.

This project will exercise a great many key design skills:

- coming up with a viable high level architecture

- working out the implications of your architecture to component definitions

- identifying and addressing questions and risks best addressed through research and/or prototypes

- validating your architecture against your requirements (and general goodness properties)

- describing that architecture well enough that others can understand it

- reviewing someone else's design, understanding the issues, and

articulating them in a useful form

- working the issues that are inevitably discovered to yield

an architecture that actually has a chance of working.

All of the above skills are fundamental to any significant software development effort.

There are multiple parts to this project, each of which has its

own goals, processes, and deliverables:

P2A.1 Plan

The development of the architecture may prove much more difficult

than you imagine, the process of describing it clearly in writing

may prove extremely challenging, and you will probably discover that

(after your design review) you have some non-trivial work

to do to fix your design. Also, remember that design is a wicked

problem:

- The architecture evolves with your evolving

understanding of the problem.

You may not be sure how many components you

have to specify and analyze until your

architecture is largely complete.

- You may not have any sense of how difficult

the component analyses will be, or how many

iterations you will have to make over the

architecture until you have gone through a

few rounds of revisions, and can judge the

difficulty of the issues and rate of convergence.

- You may not have any sense of the number

or difficulty of the issues that will be raised

in the design review until after it has been

completed. (If you had recognized those issues

you would have dealt with them before the review.)

- Your design will almost surely be based on other libraries,

toolkits, or services. Unless you have already built

software based on these tools, you do not yet know how well

they will address your needs, or what constraints they will

impose on your design. You may find it necessary to build

(prototype) applications based on these services before

you can understand how to design your application.

In project 1, you were given a relatively complete list of things

you had to do, along with some guidance on how to do them.

In project 2:

- the list of things you have to do depends on your

chosen project and approach to accomplishing it.

- you cannot know how much work these (design, research,

prototyping) tasks will be (or even if they will be

successful) until you start pursuing them.

These factors can cause the work to very quickly

grow much larger than your initial estimates.

Many students consider the development and description of the

preliminary architecture to be the most difficult part of the entire course.

Many final post mortem analyses express regret for not having adequately

investigated issues and options in project 2 that caused them considerable

difficulty in projects 3 and 4.

This means you need to get started as soon and fast as possible,

and budget a great deal of time for experimentation, revision and rework.

Each team will prepare a task-breakdown, identify the dependency relationships between

tasks, and owners for each sub-task, assign due-dates, and schedule regular reviews

of both work-products and progress (to enable adequate time to deal with the

problems that will arise).
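
A task breakdown with dependency relationships can be checked mechanically. The sketch below is a minimal illustration in Python; the task names, owners, and dependencies are invented, and the representation is an assumption, not a required plan format. It uses the standard library's `graphlib.TopologicalSorter` to find an order of work that respects every dependency (a circular dependency raises `graphlib.CycleError`).

```python
from graphlib import TopologicalSorter

# Hypothetical tasks: name -> (owner, set of tasks that must finish first).
TASKS = {
    "list components": ("alice", set()),
    "prototype toolkit": ("bob", {"list components"}),
    "draft interfaces": ("carol", {"list components"}),
    "write architecture": ("alice", {"prototype toolkit", "draft interfaces"}),
    "line up reviewers": ("bob", set()),
}


def work_order(tasks):
    """Return task names in an order that respects every dependency."""
    graph = {name: deps for name, (_owner, deps) in tasks.items()}
    return list(TopologicalSorter(graph).static_order())


def unowned(tasks):
    """Return the names of tasks that have no owner assigned."""
    return [name for name, (owner, _deps) in tasks.items() if not owner]
```

Due dates and review checkpoints would still need to be added by hand; the point of the sketch is only that a task with no owner, or a dependency cycle, can be caught before the schedule is written.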

A good management plan will include regular (e.g. daily) status checks, whose

results should be recorded in a minutes file and/or white-board snapshots.

As your understanding of the problem

evolves and you respond to unanticipated events,

you will have to revise your plan (not merely estimates, but

the work to be done).

If deadlines are missed, or deliverables fail to pass review, the fact, as well

as the causes and the plan to remedy them must be documented.

In Project 2B another team will review your P2A architecture. Trying to line

up reviewers at the last minute is extremely difficult and stressful.

You will find that things go much better if you have lined up which team will

review your architecture during this (P2A) phase.

Your initial Management Plan will be graded on the basis of:

- 20% good use of time and resources (work apportioned reasonably over the available time)

- 20% specifically calling out important research and/or prototyping activities

to gain required understanding and validate your proposed design

- 20% specificity of plan (clear responsibilities: who, what, when) including

lining up and preparation for reviews

- 20% provisions for early detection of problems, and time to deal with them

- 20% completeness

Maintain your plan (and status update minutes) in Google Docs.

You will probably be updating them daily,

and we will be reviewing this history.

When you are ready to submit your plan for grading:

- prepare your management plan (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named management_2a.txt

- up-load it for submission (only one person on the team needs to do this)

P2A.2 Preliminary Architecture

You will design and specify a set of components that is (in combination)

capable of delivering your version 1.0 product functionality.

It is tempting to draw some high level pictures and a brief

set of descriptions and call it an architecture ...

but the devil is in the details.

The hard part is working out the implementation and

characteristics of the architecture

in sufficient detail to demonstrate that

the described system is buildable and likely to work:

- the described assembly of components is capable

of delivering the required functionality.

- each of the described components is

well defined and reasonably implementable.

- the described interfaces are reasonably abstracted

(understandable and convenient) from the clients'

perspective.

- the described interfaces are reasonably abstracted

(clear, implementable, with good information hiding)

from the component's perspective.

- the development can be accomplished with mature and usable tools

and technology (ideally using off-the-shelf components).

Please keep in mind that

a description of proposed screens/commands and the paths between them is not an architecture.

That is a proposal for a user interface and functionality.

An architecture describes the structure of the software

(e.g. programs or classes) that will implement that functionality,

and the interactions of those components with one-another and

external services or agents. For each component in your

architecture, you should be able to characterize its interfaces

(e.g. procedure calls, HTTP gets) and functionality (e.g. the methods

or operations it supports).

In Chapter 3.5 of Code Complete, McConnell provides an overview

(and check-list) for the things that you might want to cover in your

architectural description.

The most relevant (for this project) sections from his presentation are probably:

- Program Organization

- Major Classes

- Data Design

- Architectural Feasibility

- Buy-vs-Build and Reuse Decisions

- General Architectural Quality

To create your architecture, it may be helpful to:

- start with the environment in which it will run

(e.g. users, different computers, services with which you will interact).

- identify your key data items (e.g. persistent files, persistent databases,

active session data).

- note how the data flow from one place to another, and what processing

and/or transformations are performed on it.

- imagine and sketch components onto which that data and those transformations

can be projected.

- suggest a form (e.g. class, thread, process, server) for each component.

- characterize the inputs to, outputs from, requests, and information exchanges

between those components (files, data streams, calls, RPC requests, pub-sub events,

call-backs, etc).

You may find it helpful to record your component interfaces as class and

method declarations for all external entry points, and then implement

trivial mocks (that simply make calls to other components and

return simulated results of the appropriate types). Doing so may

enable you to build a toy version of your entire application, and

more clearly understand the components you have defined and the

flows of information and control between them.

It will also help to turn up misunderstandings about proposed

interfaces, and serve as a starting point for continuous integration.
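
One way to try this approach is sketched below in Python. The components (`Storage`, `Notifier`, `Summarizer`), their methods, and the `Item` type are all invented for illustration; your own interfaces will differ. The interfaces are declared with `typing.Protocol`, each has a trivial mock that returns simulated results of the right type, and one component is wired to the mocks to form a toy end-to-end flow.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Item:
    item_id: int
    title: str


class Storage(Protocol):
    """External entry points of a hypothetical persistence component."""

    def load_items(self, user: str) -> list[Item]: ...


class Notifier(Protocol):
    """External entry points of a hypothetical notification component."""

    def send(self, user: str, message: str) -> bool: ...


class MockStorage:
    """Trivial mock: returns simulated results of the right type."""

    def load_items(self, user: str) -> list[Item]:
        return [Item(1, "first"), Item(2, "second")]


class MockNotifier:
    """Trivial mock: records messages instead of delivering them."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, user: str, message: str) -> bool:
        self.sent.append((user, message))
        return True


class Summarizer:
    """A component that depends only on the declared interfaces."""

    def __init__(self, storage: Storage, notifier: Notifier) -> None:
        self.storage = storage
        self.notifier = notifier

    def daily_summary(self, user: str) -> bool:
        items = self.storage.load_items(user)
        return self.notifier.send(user, f"{len(items)} items pending")
```

Calling `Summarizer(MockStorage(), MockNotifier()).daily_summary("pat")` exercises the whole path. Because `Summarizer` sees only the declared interfaces, a real implementation can later replace a mock without changing the caller.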

Recall that McConnell described design as a

wicked problem, in that you may not even have a list of

problems to be solved until you have designed (and discarded)

a few solutions. This is a highly iterative process, and these

iterations may consume a great deal of your time.

Often, the best way to convince ourselves of the feasibility

of an architecture

is to sketch out possible implementations of each major

component, looking for options and issues. Such preliminary

research, design, analysis, and prototyping is an important

sanity check on the viability of the proposed architecture.

The goal of these investigations is not to fully design the

major components, but merely to present a compelling argument

for the "feasibility" of the proposed component descriptions:

- Where familiar off the shelf technology is available,

this may require no more than a brief description of its

capabilities and how it will be used.

- Where newer or unfamiliar technology

(e.g. language, API, tool kit or service) is chosen, it may be

necessary to do further research (and/or prototyping) to

confirm its usability and suitability

for its proposed role in your project,

and to understand the constraints it may impose

on your design.

Teams often encounter disastrous design, implementation,

and integration problems because they assumed that

an approach or toolkit they read about would meet their needs.

- For new components that must be constructed from scratch,

it is common to construct a high level design ... with

enough detail to convince ourselves that such a component

is buildable, and unlikely to involve any "hard" problems.

Your architecture need not result in a complete top-down

design for the whole system. A component analysis only needs to be

complete enough to convince the reviewers that the major

likely problems have been identified, and are all likely to be solvable.

Every major component should be analyzed to this "feasibility" level.

Each member of the team should take ownership of the analysis

for at least one major architectural component. If you have many

more components than people, each person should choose at least

one interesting component for thorough analysis or one

interesting question to resolve through a prototyping effort.

If you have too many questions and components, it may be impossible

for you to do all of the necessary design and prototyping within a

few weeks. After each person has taken on a difficult component or

question, you can do a more superficial job on the simpler questions

and components (but note explicitly in each that you are doing so).

In the unlikely situation that you have more people than

interesting components,

sub-divide your major components to create more pieces or find a

problem that requires further analysis or prototyping.

NOTES ON INVESTIGATIONS

- If you intend to use new tools (with which you have no prior

experience) you do not know how easy they will be to use,

how well they will satisfy your needs, or what constraints

they will impose on your architecture. It would be prudent

for you to:

- download the tool kit

- review the documentation and a few sample projects

- build a trivial version of your proposed product

(with trivial simulations of each component) to

ensure they will be able to talk to one-another

- Please be aware that if you gloss over such validation in this phase,

you may find that your architecture and design (that were based

on assumptions about how a new tool would work) are impossible

to implement in the final weeks of the project.

A few hours of investigation in the next two weeks could easily

save you from many days of stress and rework in subsequent phases.

Architectural investigation, design and validation are critical prerequisites.

Treat them like a matter of life and death ...

because for a non-trivial software project, they often are.

- It is quite possible that you will not be able to complete all

of the required investigations and prototyping in a single week.

- if this proves to be the case, it is a confirmation

of the significant risk associated with those issues,

and of the criticality of deeper investigation.

- you should define the problems to be investigated

as part of this submission, but those investigations

do not need to be completed until you submit your

final architecture.

Your architectural description should include:

This should be backed up by a set of component analyses

(research, designs, analyses, prototypes, etc) that were done to establish

the feasibility of implementing each major component:

- where existing technology is being used, provide references

and summarize the investigation you did to determine its

adequacy and usability.

- where analysis was done, provide a write-up of the issues

investigated, the means of the investigation, and results.

- where prototyping was done (e.g. to assess the usability

of a new tool or viability of a proposed approach), describe

the questions and risks to be addressed, a summary of the approach,

and the results

(and github URLs for the actual prototypes constructed).

- where new design work was done, provide design documentation

similar to (but hopefully much simpler than) that described

for the architectural description.

You may want to sketch out parts of the user interface

(e.g. screens, web pages, operations and navigational options)

to guide your definition of required functionality.

These may be a key foundation for the architecture that

will implement them, and valuable background for reviewers

of that architecture.

But these are not software architecture, and will not satisfy

the requirements for either the preliminary or final architecture.

Getting Started and Early Feedback

To help you through the most difficult parts of this process, we suggest

that you (within the first two days):

- conceive a list of proposed software components.

- prepare a one-line description of what each

component does and its role in the product.

- draw a diagram showing their relationship to one-another and

the context in which they run and interact with the outside world.

- prepare a list of the issues (e.g. new technologies, available tools,

non-obvious APIs, difficult algorithms, etc)

that might arise in the implementation of each component.

- schedule a presentation with me (and/or a Grutor) to review

those component descriptions and concerns.

- get feedback on what else you might need to do before you start

researching tools, doing prototypes and elaborating designs.

Once you have a reasonable model of how your functionality can be

implemented and the questions/risks that must be examined you will

know what research, prototyping and design examinations you need to complete.

But if you do not have a reasonable starting point and list of issues

to address, you may wind up wasting a few weeks of work, much of which

winds up being thrown away, and does not enable you to move forward.

Describing an Architecture

There is a stereotype that scientists and engineers tend not to be good writers.

To the extent there is any truth in this stereotype, it is strange and tragic ...

because story-telling is fundamental to what all researchers and engineers do.

They may spend much of their time doing experiments, models, and designs, but

understandings of complex phenomena and systems are of little value until they

have been explained to others (who will apply and/or instantiate them).

Scientists and engineers do not (or at least should not, as part of those jobs)

write fiction.

But they do nonetheless create stories where characters,

with satisfying backgrounds, fulfill their roles,

and traverse their arcs,

in accordance with, and exemplifying compelling themes.

You understand the system you have designed.

Now you have to devise a story that will recreate that vision for others:

- who are the characters in your story, and what are their roles?

- what descriptions and narratives will most fully portray them?

- what key challenges do they face, and what principles/strategies guide them to success?

- what background is necessary to enable readers to absorb this story?

You will note that:

- there is much reading on what makes a good architecture

- there are two full lectures on developing and evaluating architectures

- you have (listed above) a two-digit number of things that

you need to describe about your architecture

It is suggested that you describe your architecture in multiple,

complementary ways:

- conceptual: what are the key (non-trivial) problems to be

solved, and how (at a high level) do you propose to solve them?

- structural: what are the major data and computational components, and for each:

what kind of thing is it, and what are its contents/responsibilities?

- functional: what operations does each component support, with

what inputs and outputs?

- behavioral: what are the key operational scenarios, and what

actions (in each of the components) are involved in

implementing those scenarios?

But I do not give you forms, or even a table of contents for

your architectural description.

There are a few reasons for this:

- Every system is unique in both structure and constraints, and

its story probably requires a unique telling. Giving you

examples that were not congruent with your own project

would probably do more harm than good ... as you tried to force

your square peg into that round hole.

- Among the (difficult and soft) skills we want you to develop

are

- learning to visualize complex systems

- developing the ability to communicate those visions in technical prose

The ability to describe complex technical concepts and issues is a

fundamental skill for any scientist or engineer,

and I expect that working out how to best

tell your story will prove to be a highly educational exercise.

- Figuring out the best way to tell the story is far more important

(and often more difficult) than telling it.

Once you have the right outline for your story,

drawing the pictures and writing the prose are often relatively straightforward.

But, if you review the

Garlan and Shaw paper on software architectures

you will see prose and graphical descriptions of many classical architectures.

For client-server models, you might find it interesting to look at simple

models of the ORB client-server binding, and of the client-to-data paths

in the Google File System and Hadoop file systems.

Recognize that your reviewers may not have read your initial concept and

competitive analysis, and that they may not be familiar with the technologies

you propose to use. Include, in your introductory sections, references to

your proposal and to overview documentation for the tools you propose to use.

Your preliminary architecture will be graded on the basis of

- 25% overview

- clarity of the conceptual roles of each component

(note user screens are not software component descriptions)

- clarity of their interactions and how they cooperate to provide

the required services,

- diagrams depicting component roles and relationships

- diagrams depicting external and inter-component interfaces

- how well this document prepares the reviewer(s) for the

specifications and issue discussions to follow.

- 15% component specifications

- completeness/clarity of functional descriptions

(of the proposed implementation)

- completeness/clarity of S/W interface descriptions

- completeness/clarity of S/W component requirements

- adequacy of this spec to guide future design and implementation

- 10% issue recognition and understanding

- completeness of the list (are there obvious issues missing)

- worthiness of these issues (are these real architectural issues)

- depth of the understanding of the issues

- insightfulness of proposed solutions

- 10% architectural rationale

- how well it captures the issues that drove or challenged the architecture

- how well it justifies the responses to those issues

- clarity and soundness of the analysis and rationale

- 10% component design analyses

- covers all non-trivial components (screen descriptions don't count)

- how clear it is that your component can be implemented

- how reasonable the proposed implementation is

- good use of off-the-shelf tools and existing technologies

- how clear it is that your implementation will work

- how clear it is that your component can meet its requirements

- quality of the analysis, how well the rationale was captured,

and value added by this analysis.

- 10% prototypes and practical investigations

- are all key risks identified and targeted for investigation

- does each investigation have clear and appropriate goals

- reasonableness of the planned investigation

- quality of the implementation of that plan

- quality of the investigation and value added by this work.

- 20% overall quality of the architecture

- clarity and simplicity

- is the architecture a reasonable response to the requirements

- does it exhibit good modularity and interface abstraction

- how effectively have the recognized issues been responded to

- is this architecture likely to be buildable

- is this architecture likely to work

Maintain your architecture description with history (e.g. on Github or in Google Docs).

When you are ready to submit your preliminary architecture for grading:

- prepare your architecture (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named architecture_2a.txt

- up-load it for submission (only one person on the team needs to do this)

P2B Architectural Review

As we refine our design, we will attempt to see how it handles

the scenarios we have imagined as exercises of all of its major

(run-time and non-run-time) requirements.

But it is natural for us to be prone to confirmation bias.

It is easy to become convinced that we have

made the right decision and that our design is therefore correct.

After we have done our best at refining and evaluating our architecture,

it is time to seek independent validation from people who haven't already bought-in

to our solution.

Each team will read over another team's architectural presentation,

prepare detailed notes, conduct a formal design review, produce a

written report, and work with the designing team to ensure reasonable

resolution of all outstanding issues. You will select a moderator,

who will work with the submitting team to ensure that the package

is ready for review before it is sent to the rest of your team for

review. Please document any discussions between your moderator and

the submitting team.

It is likely that the reviewing team may need a little bit of background

before they can fully review the proposed architecture. It is suggested

that, along with your preliminary architecture, you also send a copy of

your (P1D) final project proposal, and URLs for general background on

any tool kits or APIs you are proposing to use.

NOTE: for this project, it is the reviewing team that will be graded.

P2B.1 Review Notes (per person submissions)

Digesting other peoples' designs is another fundamental skill

for scientists and engineers.

Prior to your review meeting, each of you (individually) will read

the submitted architecture description and prepare detailed notes

on all questions and concerns. These notes must be submitted at

least 24 hours prior to the actual review session. They should be

neat notes, describing legitimate issues clearly enough to be sent

as email, and organized for discussion (e.g. in the recommended

review order). But these are notes, and there is no need for

polished prose, or even complete sentences.

Note that the primary purpose of the architectural review is to identify

issues with the proposed architecture, and you will be graded on your

ability to understand and explore the implications of the described

architecture.

But, you are also invited to make observations about the proposed

functionality and competitive positioning. While these are not

architectural, they may well be within scope for early stage

project reviews.

There are a few standard approaches to architectural reviews:

- Imagine scenarios to exercise the key requirements, and see

how well the proposed architecture seems able to handle them.

Following this path will force you to understand the details of

component relationships, functionality, and interfaces.

After considering the basic flows of information and control,

you might then consider obvious modes of failure, and the

ability of the specified components to reasonably detect and

handle each.

- After you believe you understand the components' responsibilities,

functionality, and interfaces, consider a list of general

architectural goodnesses (e.g. modularity, generality, testability,

maintainability, portability, etc) and how well this architecture

fares in each of these respects.

- Consider areas where you are not yet confident of your understanding

or the rightness of the proposed solution, and think of questions

(to be asked during the review) that would better illuminate those

issues.

When you have completed your study notes in preparation for the review,

each individual should prefix them with a

standard submission prologue

and submit them (with the name review_notes_2b.txt).

NOTE: Late points cannot be used for this submission.

- Full credit if it is submitted 24 hours before the review meeting.

- 50% credit if it is submitted prior to the review meeting.

- 25% credit if it is submitted after the review meeting.
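The credit schedule above can be expressed as a small function. This is only an illustrative sketch: the function name, the example meeting time, and the handling of the exact boundaries (a submission exactly 24 hours before counts as full credit, and a submission exactly at the meeting time counts as "after") are assumptions, not part of the course rules.

```python
from datetime import datetime, timedelta


def notes_credit(submitted: datetime, meeting: datetime) -> float:
    """Return the fraction of credit earned by review notes.

    Boundary handling is an assumption made for this sketch.
    """
    if submitted <= meeting - timedelta(hours=24):
        return 1.0   # at least 24 hours before the review meeting
    if submitted < meeting:
        return 0.5   # before the meeting, but inside the 24-hour window
    return 0.25      # at or after the start of the meeting


# Hypothetical meeting time, used only for illustration.
meeting = datetime(2024, 2, 23, 14, 0)
early = notes_credit(datetime(2024, 2, 22, 13, 0), meeting)
same_day = notes_credit(datetime(2024, 2, 23, 9, 0), meeting)
late = notes_credit(datetime(2024, 2, 23, 15, 0), meeting)
```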

P2B.2 Review Meeting (graded for the reviewing team)

Your team will conduct a formal design review of the submitted

architecture. The team that submitted that architecture will be

present to answer questions, but will have no other formal role

in the process.

Schedule your review meeting with the professor (or Grutor). Make sure the

submitting team can get you the architectural package far enough in advance

to enable you to do the required study and prepare your notes prior to the

review meeting.

P2B.3 Review Report (graded for the reviewing team)

The designated scribe for your review session will write up a report

of all conclusions reached in the design review. This is specifically

not "meeting minutes" (including all questions, answers, and discussion

points).

Rather it is a distillation of key issues and decisions.

It should be carefully written and reviewed. It must contain:

- a clear summary of each important issue raised

- a characterization as a defect, issue, or question

- a characterization as must-fix, should-fix, or comment

- a disposition for the entire project of

- approved

- approved with required changes

(which you are confident the team can make)

- requires another meeting

(the required changes are sufficiently great or complex

that the project should be re-reviewed after they have been made)

- rejected (this cannot be made to work)

Please note that must-fix does not mean "I feel strongly about this".

It means:

"In our judgement, unless this problem is fixed the proposed product

cannot (reasonably) be built (or will not work)."

Thus, unless it is obvious, it is often a good idea to accompany must-fix

designations with a justification/rationale.
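One way to keep the required report contents straight is to model them as data before writing the text. The sketch below is only an illustration: the class names, field names, sample findings, and the plain-text layout produced by `render` are all hypothetical, and the course does not prescribe any particular format beyond the items listed above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    DEFECT = "defect"
    ISSUE = "issue"
    QUESTION = "question"


class Priority(Enum):
    MUST_FIX = "must-fix"
    SHOULD_FIX = "should-fix"
    COMMENT = "comment"


class Disposition(Enum):
    APPROVED = "approved"
    APPROVED_WITH_CHANGES = "approved with required changes"
    ANOTHER_MEETING = "requires another meeting"
    REJECTED = "rejected"


@dataclass
class Finding:
    summary: str
    kind: Kind
    priority: Priority
    rationale: str = ""  # recommended for must-fix items


@dataclass
class ReviewReport:
    project: str
    disposition: Disposition
    findings: list[Finding] = field(default_factory=list)

    def missing_rationales(self) -> list[Finding]:
        """Must-fix findings that have no justification attached."""
        return [f for f in self.findings
                if f.priority is Priority.MUST_FIX and not f.rationale.strip()]

    def render(self) -> str:
        """Produce a plain-text summary (layout is an assumption)."""
        lines = [f"Project: {self.project}",
                 f"Disposition: {self.disposition.value}", ""]
        for n, f in enumerate(self.findings, start=1):
            lines.append(f"{n}. [{f.kind.value}, {f.priority.value}] {f.summary}")
            if f.rationale:
                lines.append(f"   rationale: {f.rationale}")
        return "\n".join(lines)


# Hypothetical findings, for illustration only.
report = ReviewReport(
    project="Example Project",
    disposition=Disposition.APPROVED_WITH_CHANGES,
    findings=[
        Finding("No component owns session state", Kind.DEFECT, Priority.MUST_FIX),
        Finding("Storage interface omits error returns", Kind.ISSUE,
                Priority.SHOULD_FIX, "callers cannot detect failed writes"),
    ],
)
text = report.render()
```

A scribe could call `missing_rationales()` before submitting to spot must-fix items that still need a justification.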

When you are ready to submit your review report for grading:

- prepare your report (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named report_2b.txt

- up-load it for submission (only one person on the team needs to do this)

You should also send a copy to the team whose project you reviewed, so they can

start working on the required changes.

These submissions will be graded on the basis of:

- 25% individual notes prepared in advance of review

- the thoroughness of study (i.e. understanding

of the proposed architecture and its implications)

to which they attest

- how clearly articulated (specific vs vague) the issues are

- all comments appropriate and within scope

- issues reasonably organized for discussion

- 50% the review process

- everyone is well-prepared and participating constructively

- agenda and flow

- meaningful discussions of in-scope issues

- all viewpoints heard, avoid rat-holes

- each discussion ends in a clear (explicitly stated) characterization

(defect, issue, question),

and disposition (must-fix, should-fix,

or comment/suggestion).

- ensure issues are understood, but do not try to resolve them

- all discussion is within scope

(primarily architectural, but some functional and positioning

issues may also be included)

- culminates in a clear (explicitly stated) project disposition

(approved, approved w/required changes,

requires another review or rejected).

- review the product critically but courteously

- review was a valuable and productive experience

- 25% the review report

- time, place, project, attendees

- clarity with which issues are presented

- explicit disposition of each issue

- completeness and accuracy

- scope of the report (just issues, no opinions)

- explicit disposition of the entire project

P2C.1 Revised Architectural Proposal

You will get considerable feedback on your initial architecture proposal

from the professor (or Grutor) and the team that reviews it. Based

on this feedback, you should revise your architecture to address all

of the must-fix issues, and as many as possible of the should-fix issues.

You will be graded on these resolutions, so make sure you document all

changes made and agreement from the reviewers.

Your final proposal is a new version of the original proposal,

revised based on the feedback from the graders and reviewers.

The grading criteria for the revised architectural proposal will be

similar to the initial architectural presentation, but with the

additional expectation that your rationale and issue discussions

will include issues raised in the reviews, and your design will

have responded to those suggestions:

- have all must-fix issues been responded to

- quality of the responses to must-fix issues

- quality of the responses to should-fix issues

Please make sure that your final architecture acknowledges this

feedback and explains how you have responded to each item.

The key questions we will ask when grading your final architectural proposal are:

- Have all significant design questions and risks been addressed?

- Does the team clearly understand the libraries/toolkits/services they will

be using and how to build software that uses them?

- Are the descriptions of all software components now sufficiently

complete that I could now sit down and start writing detailed

specs and designing each?

- Do I believe all of the software components to be buildable,

and that if they were built as described, the product would work?

Prepare a new architectural description (or revise your original description)

with history (e.g. on GitHub or in Google Docs).

When your team has addressed all issues and you are ready to submit your final architecture for grading:

- create a standard submission prologue

(that includes a URL to your architecture description), and

entitle it architecture_2c.txt

- up-load it for submission (only one person on the team needs to do this)

P2C.2 Post-Mortem Report

This project is a learning exercise, and one of the major ways

we learn is by analyzing past mistakes. You will, as a team,

review all aspects of this project. One of you will then

summarize that process into a post-mortem analysis report.

The report should summarize the key issues raised in your post-mortem,

and the conclusions you came to. Your post-mortem discussion

should include:

- creation of the preliminary architecture (from initial

brain-storming through its evolution and refinement).

- component design analyses

- creating draft architectural description (trying to tell your story)

- studying for your design review

- the design review meeting (as a reviewer)

- the design review meeting (as a reviewee)

- writing up the review report and working the issues

- revising your architecture to address the issues

- the planning and ongoing management of these activities

- the overall project as an educational exercise

The submission and grading of Post Mortem reports is described

in the General Grading information.

Make sure that you have kept your meeting minutes and management plan up-to-date.

When you are ready to submit the Post-Mortem report (and management notes) for grading:

- prepare your report (ASCII text, or an ASCII text

standard submission prologue

that refers to it) in a file named postmortem_2.txt

- make sure that it also contains URLs for your meeting minutes and management plans

- up-load it for submission (only one person on the team needs to do this)

P2C.3 Work Share Estimates

Most of your grade for this project will be based on the team deliverables, but some of it will

be based on the quality, amount, and timeliness of work done by each team member. Towards this

end, we ask each team member to estimate the

amount of work done by each team member on this project.

Please prepare your own assessment of how much work was done by whom and submit it as

workshare_2.txt (or perhaps workshare_2.csv).

Project Phase 3

Specifications, Design and Review

Introduction

Thus far, most of your work has been team activities. Moving forward,

you will do much of your work as individuals, though still with considerable

coordination, collaboration, and assistance from other team members.

Even though much of the work in the next two phases will be individual,

it is important that you continue to work as a team:

- your design and implementation decisions may differ from the

understandings that others have of what your component will do.

- changes to or misunderstandings about component specifications

will create significant problems when you integrate your

components together.

The architecture has described the roles, functionality, and interfaces of each of

the key components in our system. A few components may be simple enough

that we can simply sit down, code them up, and watch them work ... but most

components are more complex than that. Before we start coding a non-trivial

component we need to make sure that:

- we understand (completely and in detail) what our component will do.

- we understand how we will implement those things.

- we understand how we will test the correctness of that implementation.

- we have not made any obvious mistakes in our plans for the above.

In this project, we will complete our implementation prerequisites.

In this phase you will create specifications, designs and testing plans

for chosen components.

In the next (and final) phase you will execute these plans, building,

testing, integrating, and demonstrating working software.