Named Segments vs. Logical Addressing

In the last lesson, we introduced the concept of segmentation and mentioned how it was implemented on the x86 CPU, with a small number of named segments (CS, DS, SS, ES, FS, and GS), and special prefixes in instructions to override the default segment registers.

Let's see why this might not be such a great idea.

Example: Segment-Aware memcpy

Suppose we want to provide a memcpy function that can copy data from one segment to another. Because addresses don't intrinsically include information about which segment they're in, we need to pass the segment information as additional arguments to the function, so the interface looks like

enum segment_dest_t {

SEG_DS = 0,

SEG_ES = 1

};

void seg_memcpy(void *src, void *dest, size_t size,

enum segment_dest_t src_seg, enum segment_dest_t dest_seg);

and calling the function from C code would look like

seg_memcpy(food, plate, meal_size, SEG_DS, SEG_ES);

Because the C language doesn't have built-in support for segment specifiers, we have to write the function in assembly language. Here's the code:

# Segment-aware memcopy function

# Arguments:

# 4(%esp) - source address

# 8(%esp) - destination address

# 12(%esp) - size

# 16(%esp) - source segment (0 for DS, 1 for ES)

# 20(%esp) - dest segment (0 for DS, 1 for ES)

.text

.globl seg_memcpy

seg_memcpy:

pushl %ebp

movl %esp, %ebp

pushl %esi

pushl %edi

pushl %ecx

movl 8(%ebp), %esi # source address

movl 12(%ebp), %edi # destination address

movl 16(%ebp), %ecx # size

# Check source and destination segments

movl 20(%ebp), %eax # source segment

orl 24(%ebp), %eax # combine with dest segment

jmp *jump_table(,%eax,4)

jump_table:

.long copy_ds_ds

.long copy_es_ds

.long copy_ds_es

.long copy_es_es

copy_ds_ds:

# Both source and destination use DS (default)

rep movsb

jmp done

copy_es_ds:

# Source uses ES, destination uses DS

.loop_es_ds:

movb %es:(%esi), %al

movb %al, (%edi)

incl %esi

incl %edi

decl %ecx

jnz .loop_es_ds

jmp done

copy_ds_es:

# Source uses DS, destination uses ES

.loop_ds_es:

movb (%esi), %al

movb %al, %es:(%edi)

incl %esi

incl %edi

decl %ecx

jnz .loop_ds_es

jmp done

copy_es_es:

# Both source and destination use ES

.loop_es_es:

movb %es:(%esi), %al

movb %al, %es:(%edi)

incl %esi

incl %edi

decl %ecx

jnz .loop_es_es

done:

popl %ecx

popl %edi

popl %esi

popl %ebp

ret

Gah! I don't speak assembly!

We're not asking you to write assembly, just look over it and get a high-level understanding of what it's doing. We'll outline the important aspects below.

The assembly code contains four copy loops:

copy_ds_ds: Both source and destination use theDSsegment.copy_es_ds: Source uses theESsegment, destination uses theDSsegment—the%es:(%esi)syntax creates a machine instruction that uses theESsegment for the source address.copy_ds_es: Source uses theDSsegment, destination uses theESsegment—the%es:(%edi)syntax creates a machine instruction that uses theESsegment for the destination address.copy_es_es: Both source and destination use theESsegment.

Based on the values passed in for the source and destination segments, the code jumps to the appropriate copy loop.

Okay, so I see that it's got four loops and it'd be 36 loops if we wanted to support all six segment registers (6 × 6 = 36). But I'm not sure what the fundamental design error is. I mean, it seems like it's working, right?

I think I see it. The fundamental design error is that the segment specification is in the code—the instructions—not in the data. If the segment was part of the address, we wouldn't need all these loops.

Whoa! How could the segment be part of the address?

Imagining a Different World

Let's head back in time to 1981. Intel is designing the 80286, set to launch in 1982. It's going to have 32-bit addressing, a massive leap forward compared to the 16-bit addressing of the 8086. There are lots of things they're going to change to support 32-bit addressing, one of which is considering how to handle segments. The original CPU has four named segments (CS, DS, SS, and ES), and it seems like a good idea to have some more, which is what they did. But let's imagine they had made some different decisions about segments.

Suppose they'd decided to have eight segments. But instead of naming them, they just numbered them and made them part of the logical address. Specifically, they used the top 3 bits of the address to specify the segment, and the remaining 29 bits to specify the offset within the segment so that every address intrinsically says what segment it belongs to.

Our hardware now looks like

29 bits isn't much. That's only 512 MB per segment.

Remember, we're in 1982. At that time 512 MB was a massive amount of memory. Physically, the 80286 could only address 16 MB of RAM, so it wasn't going to be a worry for a while.

Okay, but they're kicking the can down the road. That check is going to come due eventually.

Let's think about that…

512 MB Isn't Enough for Everyone

Let's suppose that some people in the press begin to suggest that soon 512 MB won't be enough memory given how fast the field is advancing, and speculate about some programs that might need a full gigabyte of data. Is our (imagined) design going to be able to handle that requirement?

I see! If we use two adjacent segments, we can access 800 MB of contiguous memory. The CPU will automatically switch to the other segment when we cross the boundary.

Wait, why? Do we need some extra trick to make that happen?

No, it's just the way binary arithmetic works.

Let's imagine we use segments four and five to hold our data, with segment four holding its maximum 512 MB and segment five holding the remaining 288 MB. Here's how we'd divide up the memory:

| Where | Logical Address | Segment | Offset |

|---|---|---|---|

| Start of segment four | 10000000000000000000000000000000 |

4 | 0x00000000 |

| End of segment four | 10011111111111111111111111111111 |

4 | 0x1fffffff |

| Start of segment five | 10100000000000000000000000000000 |

5 | 0x00000000 |

| End of segment five | 10110001111111111111111111111111 |

5 | 0x11ffffff |

Notice that segment five starts immediately after segment four ends, and thus we have a contiguous range of logical addresses from 10000000000000000000000000000000 to 10110001111111111111111111111111, which is 800 MB.

So it doesn't matter if segments are small. If they all stand together, they can be as big as needed. It's like a metaphor for life.

Yes.

If the designers were willing to have a bigger table of base and limit registers we could have other designs, such as

- 8 bits for segment number and 24 bits for offset, giving 256 segments of 16 MB each.

- 16 bits for segment number and 16 bits for offset, giving 64K segments of 64 KB each.

- 20 bits for segment number and 12 bits for offset, giving over a million segments of 4 KB each.

All of these would work, using the adjacent-segments trick to get contiguous memory. It doesn't matter how small they are if we can just put them together next to each other.

Whoa! That last one is a lot of segments. I don't think anyone was going to do that on a chip in the mid 1980s. And why would we need a million segments anyway?

And they wouldn't correspond to conceptual memory regions, like the code, the heap, the stack, and so on.

When we put a bunch of segments together to make a bigger memory area, most of the segments will be FULL, with only the one at the very end of a chain of adjacent segments being partially used.

Meh, so it's hardly even worth having the limit registers. Throw them away and call it good.

Actually, that's a good idea.

What? Why?

Tiny Fixed-Size Segments; Farewell to Limit Registers

If our segments were really small, like 4K, we could just have every segment be at its maximum size and not need limit registers at all. It also means that every allocation of physical memory for a segment will be the same size, 4K. If all allocations are the same size, we don't have to worry about external fragmentation, because every hole will be a multiple of 4K.

And internal fragmentation will be modest. For any area of memory we're chaining segments together to make, it'll just average out to half a segment per chain, or 2K.

Of course, whereas before our segments coresponded to conceptual regions of a process's memory (e.g., the code, the stack, the heap, etc.), now they don't. They're now little pieces that together get chained together to make those conceptual regions. So perhaps we shouldn't call them segments anymore. Let's call them pages.

Whoa! I remember pages from CS 105. I never thought this was where we'd end up.

Mind blown!! 🤯

It's cool that it's like a spectrum from one thing to the other, but I'm a bit concerned. Sure, we got rid of the limit registers, but we've still got like a million base addresses to keep track of. And that's on a 32-bit system. With 64-bits, we're talking four quadrillion base addresses. That's way too many.

Meh. Just give up then.

Actually, yes. We won't store all the information about where every single page is inside the CPU. We'll keep it in memory in some kind of data structure, which we'll call a page table. And that's what we'll discuss on the next lesson page.

Meh. Gotta be better than 80286 fantasy land.

Deeper Dive: PDP-11 Segmentation — Orgin of the Segfault

The earliest version of Unix ran on the PDP-7, which didn't have any kind of memory-protection support. The approach the designers used was mentioned last class—a single process in memory at a time with swapping to allow multiple processes to run. That version was a very preliminary prototype, where running the game Space Travel was perhaps the most important design consideration.

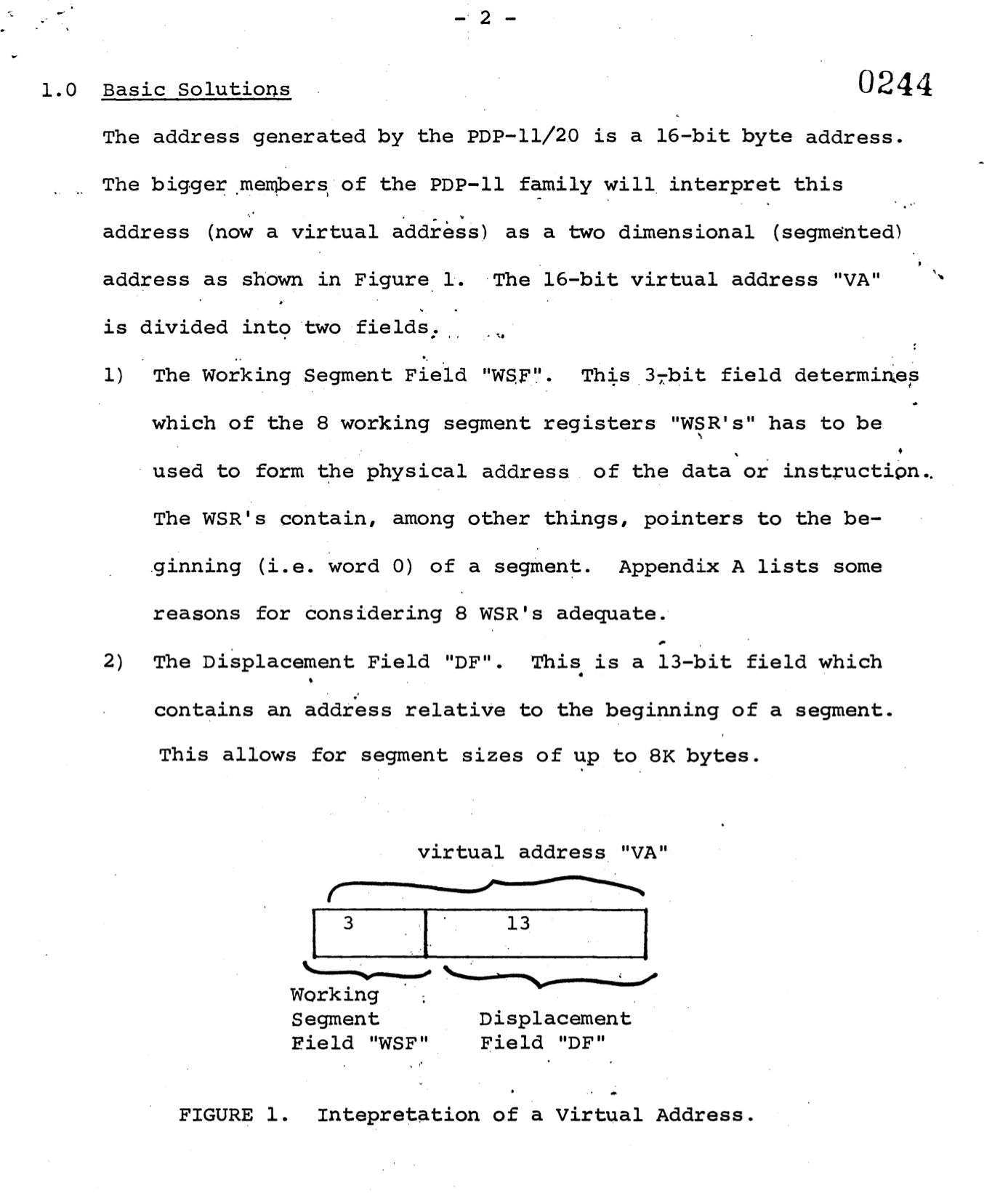

The first real version of Unix (First Edition Unix, a.k.a. V1), debuted in 1971 on the PDP-11, which added a memory-management unit. In fact, it used segmentation, and used the top three bits of the logical address to specify the segment, exactly like our fantasy 80286. Here's an image from the design document for the PDP-11/20 that describes how it worked:

Whoa! The PDP-11 logical addresses were only 16 bits. That's not much.

Yet Unix managed to run on that.

Because the PDP-11 provided memory protection via segmentation, it made sense to call a memory-access error when an address was outside of any valid segment—a segmentation fault or segmentation violation. This term has stuck around, even though modern systems don't use segmentation in the same way.

OMG! I had no idea that's where the term segfault came from.

Meh. What I've learned is that when Intel came out with the 80286 in 1982, the PDP-11 had been out for over a decade, doing segmentation the right way. Yet Intel went ahead and did it the wrong way. Classic Intel.

(When logged in, completion status appears here.)