More History of Operating Systems

I want to know what happened next! So far it's all been about these big mainframes and minicomputers, but what about the computers I know and love today?

Well, let's see what happened next.

The Microprocessor Revolution (1970s)

With the rise of timesharing, it became possible for multiple users to access a single (large) computer, but how were all those users going to connect? As we mentioned earlier, one option was to use teletype machines, but they were loud, clunky, and perhaps even then felt like something out of a previous era. It would be much more modern to have a “glass teletype”: a screen showing the text that would previously have appeared on paper. But how do you make such a thing? It would need memory for the text it shows, and enough smarts to display that text (and maybe to offer advanced features like putting text at specific places on the screen). What could do this? A computer, of course! But not a real, fancy, big computer; that's the expensive thing we're all trying to share. No, we want a small, simple, cheap computer, just enough to drive the screen. And so a number of companies set out to make these glass teletypes, trying to do so with as little money (and computing hardware) as possible.

One company, the Computer Terminal Corporation (CTC), wanted to make a better glass teletype. One of their engineers, Victor Poor, designed a simple but flexible processor to be the backbone of the machine. With the rise of integrated circuits, and a desire to cut costs, they looked to Intel, a young company best known at the time for its memory chips (and already working on chips for a calculator maker). CTC had a complete design; Intel just needed to manufacture the chip. But Intel wasn't very interested in making processor chips. They thought each computer would need just one processor but many memory chips. So CTC agreed to pay Intel $50,000 to make the chip for them.

Unfortunately, the chip took longer than expected to reach production, but CTC had a backup plan: they could build the processor for their terminal the old-fashioned way, out of discrete logic chips. By the time Intel came through with its single-chip processor, it turned out to run slower than the discrete-logic board CTC had built themselves, so CTC stuck with that solution. CTC didn't want to pay Intel for a chip they weren't even going to use, and Intel was starting to see the potential of making processors. So they made a deal: they'd go their separate ways, but Intel would have the rights to the processor architecture CTC had designed, and could sell the chip to anyone as the “Intel 8008”. This architecture would become the basis for the 8080, which would in turn be the seed for the x86 architecture that powers many computers today (and also for the Z80, an 8-bit CPU that was used in many early personal computers and became popular in embedded systems).

Although these CPUs were originally intended for specific embedded applications (like calculators or glass teletypes), people quickly realized that they could power general-purpose computers in their own right. And, because they were (relatively) cheap, they could be used in computers that sold for hundreds of dollars rather than hundreds of thousands of dollars.

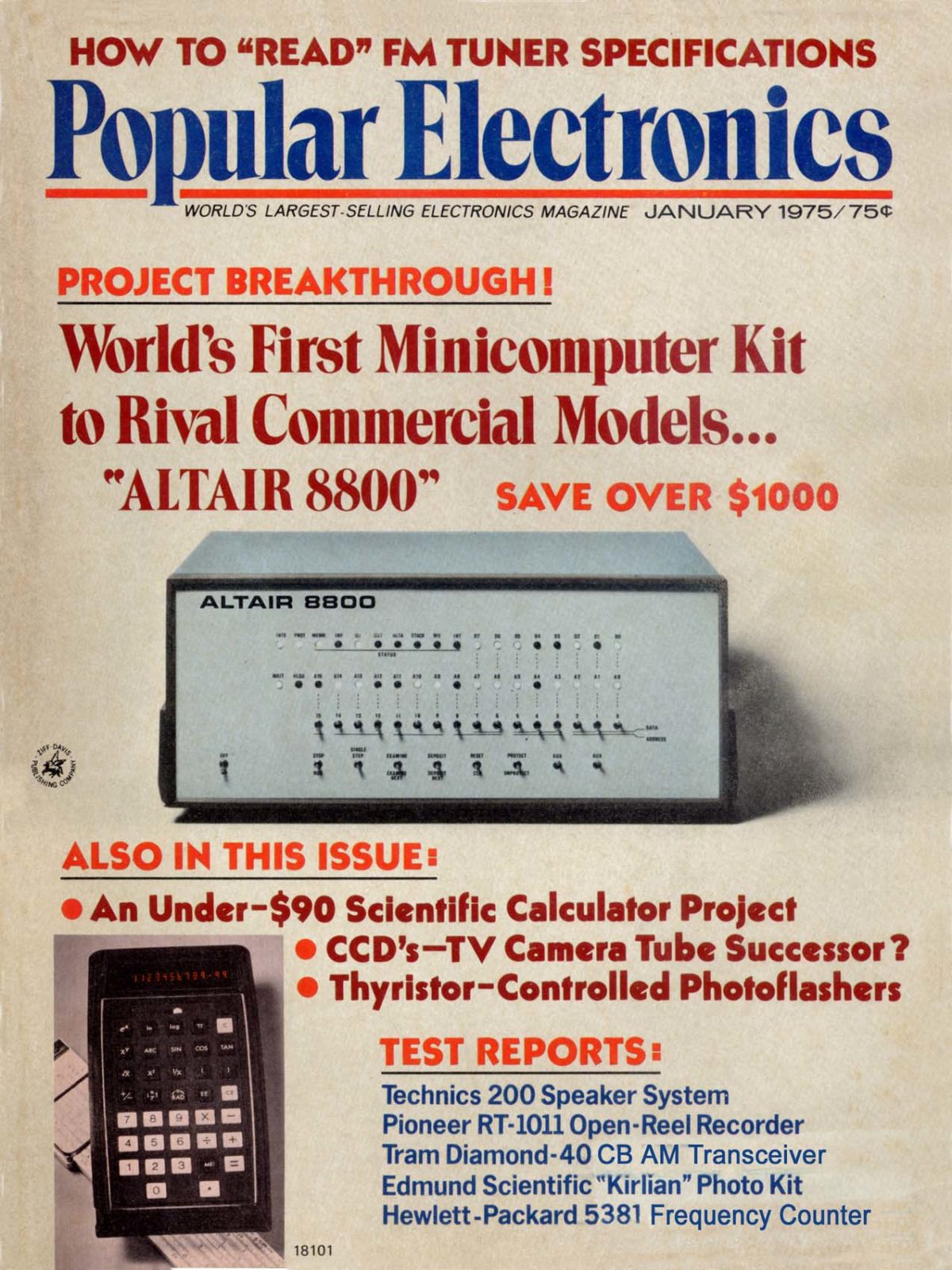

Image credit: Popular Electronics, January 1975

The Altair 8800 (shown above) had no keyboard or monitor. Instead, you programmed it by flipping switches to set the bits of the program you wanted to run, and then hit a button to run the program. Its output was displayed by a row of lights that showed the values of bits in the computer's memory. Like the earliest computers, it didn't really have an operating system; you just ran programs directly on the hardware.

Personal Computer OS: Back to Basics (early 1980s)

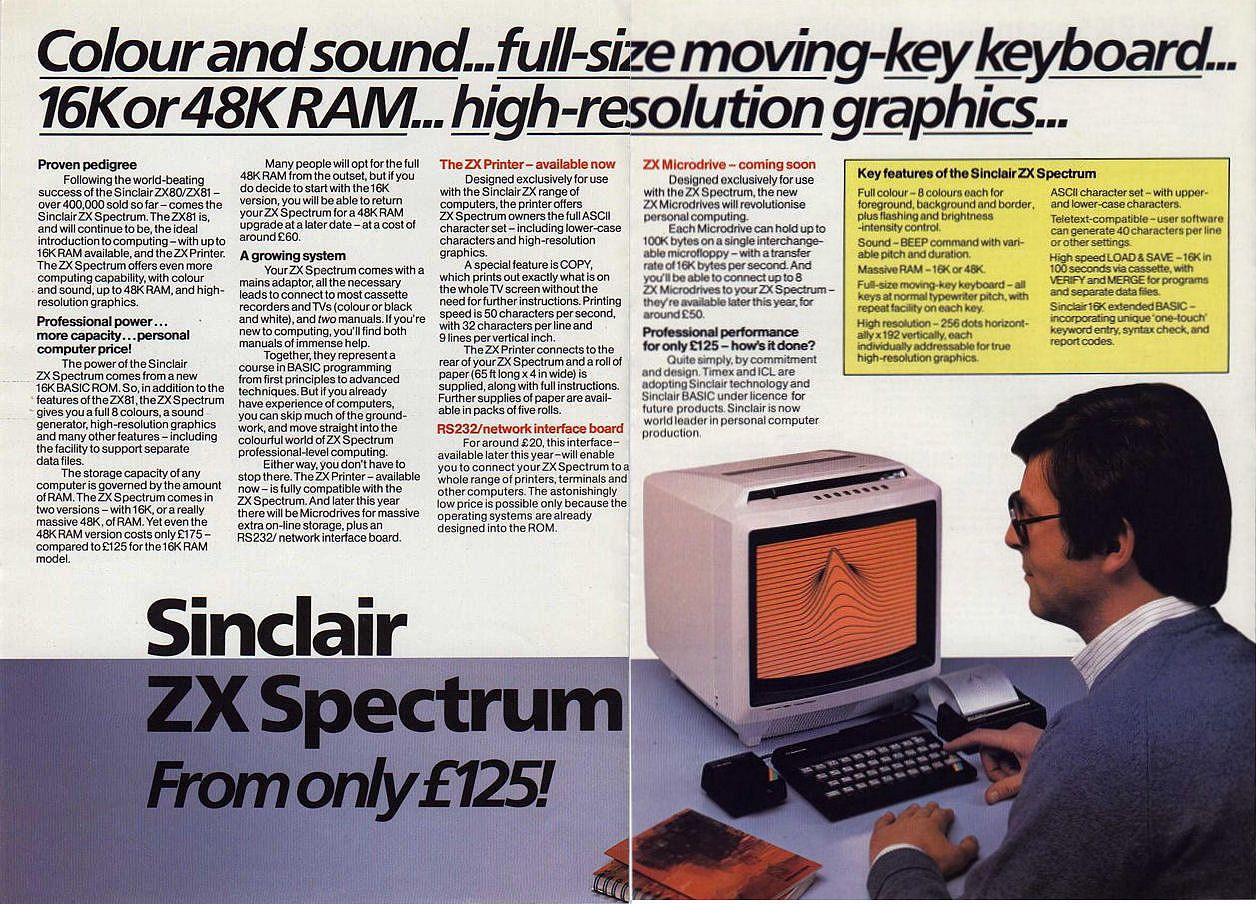

Image credit: Sinclair Research Ltd.

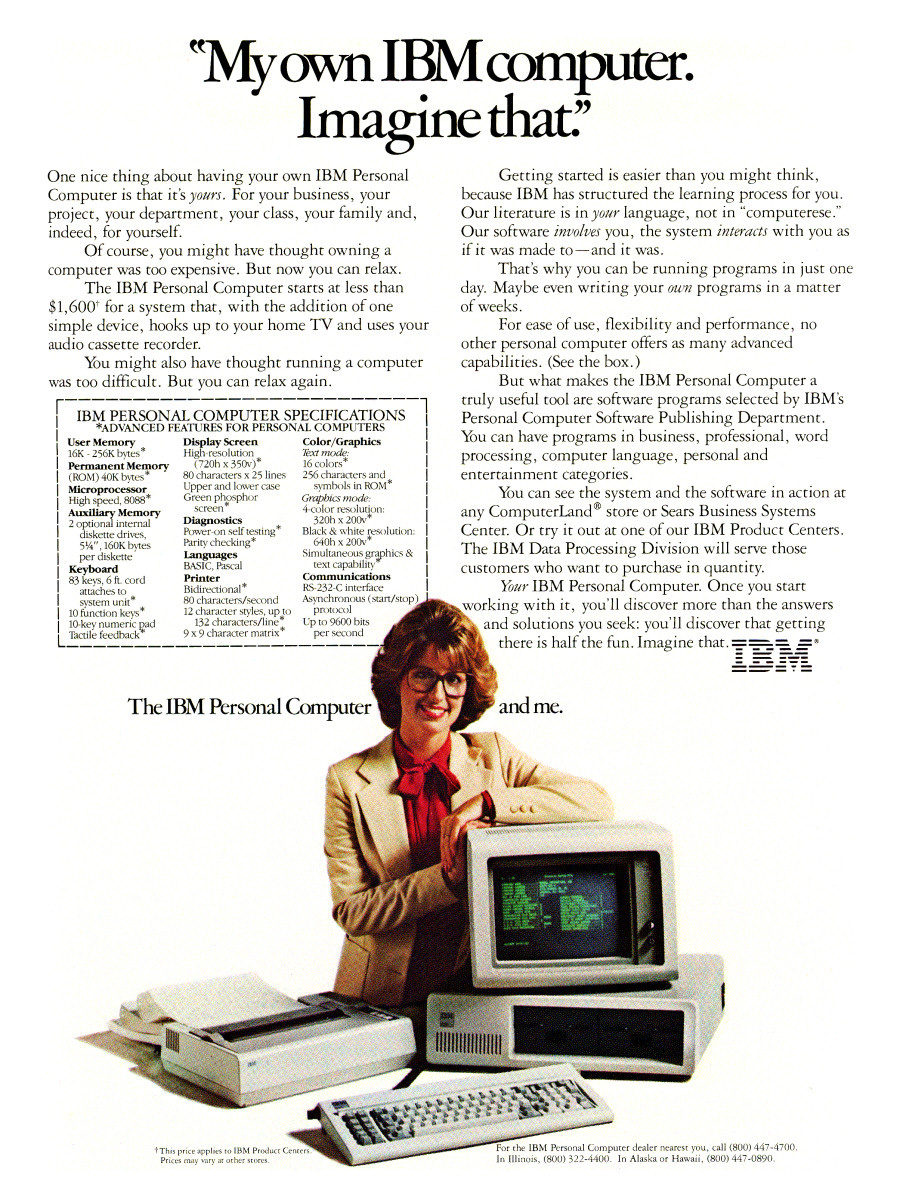

Image credit: IBM via Ars Technica

Quickly enough, personal computers appeared with keyboards and screens. Some of the cheaper models could repurpose the family TV set as the monitor. These computers were simple, and so were their operating systems. They were designed to run one program at a time, and their processors were too simple to support user mode and kernel mode, memory protection, or other advanced features that “serious” operating systems required.

Thus, early PC operating systems like MS-DOS were reminiscent of earlier, simpler systems:

- Command-line interfaces

- Limited multitasking capabilities

- No memory or I/O device protection

However, these systems made computing accessible to a much wider audience, starting the cycle of OS evolution anew.

PC OS Evolution: Increasing Complexity (Mid-1980s to 1990s)

As chip fabrication technology improved, microprocessors became more powerful and capable, adopting capabilities that had previously been the domain of mainframes and minicomputers.

With these advances, operating systems evolved to include:

- Sophisticated file management systems

- True multitasking capabilities

- Improved memory management and protection

- More user-friendly interfaces

This era saw PCs transition from simple, single-task machines to complex, multi-purpose devices, mirroring the evolution of mainframe operating systems decades earlier.

Graphical User Interfaces (Late 1980s–Early 1990s)

The widespread adoption of GUIs marked another significant leap in OS complexity:

- Intuitive, visual interfaces became the norm

- Window management systems were introduced

- Event-driven programming models emerged

Systems like Windows 3.0/3.1, MacOS, and AmigaOS brought these advanced interfaces to the masses, fundamentally changing user interactions with computers.

In some systems, the GUI was built on top of an existing text-based OS and was, in some ways, just “another program” that the core operating system ran (and that could be left out entirely if desired). Both Linux and modern macOS fall into this camp, as did Windows before Windows NT. In others, the GUI was more deeply integrated into the operating system. Windows NT and modern Windows fall into this camp, as did the original (pre–Mac OS X) MacOS.

Networked and Distributed Systems (1990s)

The 1990s saw operating systems embrace networking and the internet:

- Integration of TCP/IP stacks and web browsers

- Development of distributed operating systems

- Introduction of network operating systems for resource sharing across multiple computers

This era marked a shift from standalone systems to interconnected computing environments.

Whether the web browser is “an integral part of the operating system” or just an ordinary program that users can run is less a technical question than a business and legal one for tech companies. Both Microsoft and Apple have, at different times, claimed that their browser needed to be considered part of the operating system, came under legal scrutiny for those claims, and were forced to walk them back.

So in some sense, the boundaries of what is and isn't part of the operating system are fuzzy?

Indeed.

Mobile OS: Starting Simple, Growing Complex (2000s–Present)

The rise of smartphones initiated another cycle of OS evolution:

- Early mobile OS's (Palm OS, Symbian) were relatively simple

- Modern mobile OS's (iOS, Android) quickly grew to offer

  - Sophisticated multitasking

  - Advanced graphics capabilities

  - Complex app ecosystems

  - Efficient power management

This progression mirrors the evolution seen in personal computers, demonstrating how history repeats itself in technology.

Cloud and Virtualization (2010s–Present)

The latest chapter in OS history revolves around cloud computing and virtualization:

- Multiple operating systems running on a single physical machine

- Single OS spanning multiple machines

- Container technologies providing lightweight virtualization

These may seem like new ideas, but virtualization dates back to the 1960s. And when we use a REST API in the cloud, we're handing our data and desired program behavior over to an “operator” who runs it and gives us back the results. In some ways, this model is a lot like batch processing from the 1960s.

Meh. So nothing ever changes? It's all stagnant?

No. If you look back 100 years, you'd see both electric cars and gasoline cars, and the fundamentals of each haven't changed much. But today's cars, while operating according to the same principles as cars from 100 years ago, show considerable refinement and sophistication over those early models. The same is true of operating systems. The fundamentals of what an operating system does haven't changed much, but modern operating systems are far more sophisticated and complex than anything that was possible in the past.

At least that means that what I learn in this class won't go out of date too quickly.

Indeed!
