Progress

As of 5/6

The project is done. As it turned out, we were overly optimistic in attempting to get multiple robots doing cooperative mapping. We did get a single robot to map out its environment using FASTSLAM. The following webpages detail the end results of the project: Victoria's landmark detection code, Paul's FASTSLAM code, and Steph's wandering and control code.

Videos of the robot in action are here and here.

Victoria has also completed the picobot rules, solving map 0, map 1, and map 2. Victoria left the solutions to the path planning problem outside Prof. Dodds' door Friday evening.

As of 4/21

Steph has updated the wandering client to use the new mapping and vision code, and has made several other adjustments.

As of 4/13

Paul has changed our maptool code so that it understands landmarks. Victoria has one copy of the vision tool which identifies artificial landmarks and estimates their size, and one copy of the vision tool which plays Set!

As of 4/8

We have a shiny new master plan for what we want to get done this semester.

As of 3/25

The MCL code is now working fairly well. In addition, we have a plan for the next two weeks, focusing on tidying up loose ends from the first part of the semester and preparing for coordinated mapping using wireless.

As of 3/10

We have an implementation of Monte Carlo Localization (MCL) mostly working. However, Paul is out of town at a conference, so it's not clear whether we will be able to finish in time.
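
For anyone unfamiliar with MCL, here is a minimal sketch of the idea. It is not our actual code. It assumes a made-up one-dimensional corridor with a wall at `WALL_X` and a single range reading to that wall; the names `mcl_step` and `run_demo` and all the noise values are hypothetical, picked only for illustration. Each step moves every particle by the commanded distance, weights it by how well it explains the range reading, and resamples.

```python
import math
import random

WALL_X = 10.0  # assumed corridor: one wall at x = 10 m (illustrative only)


def mcl_step(particles, moved, measured_range, rng,
             motion_sigma=0.1, sensor_sigma=0.3):
    """One MCL update on a list of 1-D position hypotheses."""
    # 1. Motion update: shift each particle by the commanded motion plus noise.
    predicted = [p + moved + rng.gauss(0.0, motion_sigma) for p in particles]

    # 2. Measurement update: weight each particle by a Gaussian likelihood
    #    of the observed range given the range that particle would expect.
    weights = []
    for p in predicted:
        err = measured_range - (WALL_X - p)
        weights.append(math.exp(-0.5 * (err / sensor_sigma) ** 2))
    if sum(weights) == 0.0:
        # Every particle is a terrible fit; fall back to uniform weights.
        weights = [1.0] * len(predicted)

    # 3. Resample with replacement, in proportion to the weights.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def run_demo(seed=0, n_particles=500, steps=10):
    """Simulate a robot driving 0.5 m per step, starting from x = 2 m."""
    rng = random.Random(seed)
    # Start with no idea where the robot is: spread particles uniformly.
    particles = [rng.uniform(0.0, WALL_X) for _ in range(n_particles)]
    true_x = 2.0
    for _ in range(steps):
        true_x += 0.5
        reading = WALL_X - true_x + rng.gauss(0.0, 0.05)  # noisy range
        particles = mcl_step(particles, 0.5, reading, rng)
    estimate = sum(particles) / len(particles)
    return true_x, estimate


if __name__ == "__main__":
    true_x, estimate = run_demo()
    print(f"true position {true_x:.2f} m, estimated {estimate:.2f} m")
```

A real robot localizes in two dimensions plus heading and compares sonar readings against a map, but the move, weight, and resample loop is the same.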

Week of 3/6

This week was spent getting wandering working properly. Steph developed a guided client for moving the robot under manual control (code here), and a wandering client which follows the left-hand wall.
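
To give a feel for what following the left-hand wall involves, here is a minimal sketch. It is not Steph's client. It assumes the robot can read a distance to the wall on its left and a distance to whatever is in front of it, and that it accepts a forward speed and a turn rate (positive meaning turn left). The function name `left_wall_follow_step` and every threshold and gain are hypothetical.

```python
def left_wall_follow_step(left_dist, front_dist, target=0.5, front_stop=0.4,
                          gain=1.5, cruise=0.3, max_turn=1.0):
    """Return (forward_speed, turn_rate) for one control cycle.

    Distances are in meters; a positive turn_rate means turn left.
    """
    # Something is directly ahead: stop and pivot right, away from the wall.
    if front_dist < front_stop:
        return 0.0, -max_turn

    # Proportional steering: too far from the wall gives a positive error
    # (steer left, toward it); too close gives a negative error (steer right).
    error = left_dist - target
    turn = max(-max_turn, min(max_turn, gain * error))
    return cruise, turn


if __name__ == "__main__":
    # A few hand-picked readings: (left distance, front distance)
    for left, front in [(0.5, 2.0), (1.0, 2.0), (0.2, 2.0), (0.5, 0.1)]:
        print((left, front), "->", left_wall_follow_step(left, front))
```

Clamping the turn rate keeps the robot from spinning hard when the wall suddenly drops away at a doorway or corner.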

Week of 2/27

To start off this week's work, Victoria mounted the sonar unit Prof. Dodds created for us on our robot. Then Paul and Steph started writing a client to control the robot so it could wander the halls. Unfortunately, Strider, Steph's laptop, refuses to talk to both the sonar unit and the robot at the same time, so our wandering behavior has not yet been tested.

Week of 2/20

This week, we completed two separate tasks: editing the image processing software Prof. Dodds gave us to detect red moldings, and writing a remote control client for our robot, so we can more easily control it while it roams the hallways.

Week of 2/7

This week, we completed two separate tasks: soldering and connecting up a servo motor, and testing the accuracy of the robot's odometry.

Week of 1/31

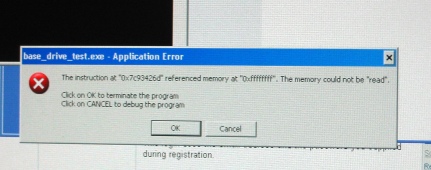

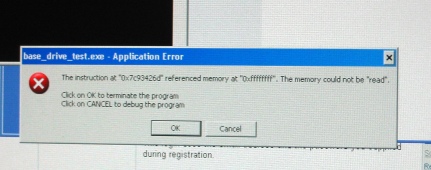

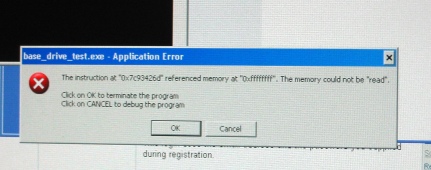

At present, we have two robots, unimaginatively named One and Two. Paul built Robot One and Steph built Robot Two. We tried to make the robots go places, but instead, we got an error!